Meltdown: Why Our Systems Fail and What We Can Do About It

Authors and related work

- Chris Clearfield

- Chris is the founder of System Logic, an independent research and consulting firm focusing on the challenges posed by risk and complexity. He previously worked as a derivatives trader at Jane Street, a quantitative trading firm, in New York, Tokyo, and Hong Kong, where he analyzed and devised mitigations for the financial and regulatory risks inherent in the business of technologically complex high-speed trading. He has written about catastrophic failure, technology, and finance for The Guardian, Forbes, the Harvard Kennedy School Review, the popular science magazine Nautilus, and the Harvard Business Review blog.

- András Tilcsik

- András holds the Canada Research Chair in Strategy, Organizations, and Society at the University of Toronto’s Rotman School of Management. He has been recognized as one of the world’s top forty business professors under forty and as one of thirty management thinkers most likely to shape the future of organizations. The United Nations named his course on organizational failure as the best course on disaster risk management in a business school.

- How to Prepare for a Crisis You Couldn’t Possibly Predict

- Over the past five years, we have studied dozens of unexpected crises in all sorts of organizations and interviewed a broad swath of people — executives, pilots, NASA engineers, Wall Street traders, accident investigators, doctors, and social scientists — who have discovered valuable lessons about how to prepare for the unexpected. Here are three of those lessons.

Overview

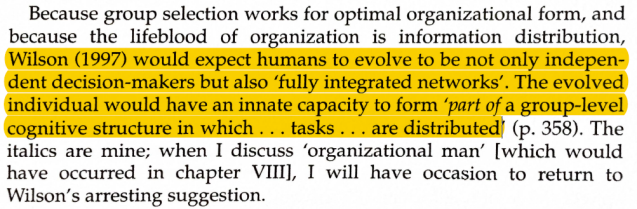

This book looks at the underlying reasons for accidents that emerge from complexity, and argues that diversity can be a fix. It’s based on Charles Perrow’s concept of Normal Accidents being a property of high-risk systems.

Normal accidents are unpredictable yet inevitable combinations of small failures that build upon each other within an unforgiving environment. They include catastrophic failures such as reactor meltdowns, airplane crashes, and stock market collapses. Though each failure is unique, all of them share common properties:

- The system’s components are tightly coupled, so a change in one place has rapid consequences elsewhere.

- The system is densely connected, so the actions of one part affect many others.

- The system’s internals are difficult to observe, so failure can appear without warning.

In all these accidents, people make misdirected progress: they keep moving in a direction that makes the problem worse. Often, this is because the humans in the system are too homogeneous. They all see the problem from the same perspective, and they all implicitly trust each other (which amounts to tight coupling and dense connection).

The addition of diversity is a way to solve this problem. Diversity does three things:

- It provides additional perspectives on the problem. This only works if diverse groups are represented in large enough numbers that they do not succumb to social pressure.

- It lowers the amount of trust within the group, so that proposed solutions are exposed to a higher level of skepticism.

- It slows the process down, making the solution less reflexive and more thoughtful.

Designing systems to be transparent, loosely coupled, and sparsely connected reduces the risk of catastrophe. If that’s not possible, ensure that the people involved in the system are diverse.

My more theoretical thoughts:

Two factors affect the response of the network: the level of connectivity and the stiffness of the links. When the nodes have a velocity component, a sufficiently stiff network (either many somewhat stiff links or a few very stiff ones) has to move as a single entity. Networks with sparse, slack connections are safe but not responsive. Stiff, homogeneous networks (similarity is an implicit form of stiff coupling) are prone to stampede. Think of a ball rolling down a hill as opposed to a lump of jello.
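A minimal sketch of the stiffness and connectivity claim, assuming a simple Laplacian-style consensus model that is mine and not taken from the book: each node has a scalar velocity, and every link pulls its two endpoints' velocities together in proportion to the link's stiffness. The function names, graph shapes, and stiffness values are all illustrative assumptions.

```python
# Toy model (an assumption, not from the book): each node has a scalar
# velocity, and every link pulls its two endpoints' velocities together
# with a strength proportional to the link's stiffness.

def ring_edges(n):
    """Sparse graph: each node is linked only to its two neighbors."""
    return [(i, (i + 1) % n) for i in range(n)]


def complete_edges(n):
    """Dense graph: every node is linked to every other node."""
    return [(i, j) for i in range(n) for j in range(i + 1, n)]


def velocity_spread(n, edges, stiffness, steps=500, dt=0.01):
    """Integrate dv/dt = -stiffness * L v with Euler steps (L is the graph
    Laplacian) and return max(v) - min(v). A spread near zero means the
    network is moving as a single entity."""
    # Deterministic start: velocities spread evenly from -1 to +1.
    v = [-1.0 + 2.0 * i / (n - 1) for i in range(n)]
    for _ in range(steps):
        pull = [0.0] * n
        for i, j in edges:
            diff = stiffness * (v[j] - v[i])
            pull[i] += diff
            pull[j] -= diff
        v = [vi + dt * p for vi, p in zip(v, pull)]
    return max(v) - min(v)


n = 10
cases = {
    "sparse, slack links": velocity_spread(n, ring_edges(n), 0.1),
    "many somewhat stiff links": velocity_spread(n, complete_edges(n), 0.3),
    "few very stiff links": velocity_spread(n, ring_edges(n), 3.0),
}
for label, spread in cases.items():
    print(f"{label}: velocity spread = {spread:.4f}")
```

With these assumed values, both stiff cases should collapse to nearly a single shared velocity within the simulated window, while the sparse, slack ring retains a substantial spread.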

When all the nodes are pushing in the same direction, then the network as a whole will move into more dangerous belief spaces. That’s a stampede. When some percentage of these connections are slack connections to diverse nodes (e.g. moving in other directions), the structure as a whole is more resistant to stampede.
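The stampede argument can be sketched with a toy model, under assumptions that are mine rather than the book's: every node has a position in a one-dimensional belief space and a constant drive, majority nodes are joined by stiff links, and any link that touches a diverse node is slack. The function name `majority_travel` and all parameter values are hypothetical.

```python
# Toy stampede model (an assumption, not from the book). Majority nodes
# push in the +1 direction, diverse nodes push in the -1 direction, and
# symmetric spring-like links pull connected nodes toward each other.

def majority_travel(n_majority, n_diverse, stiff=1.0, slack=0.1,
                    steps=1000, dt=0.01):
    """Return how far the majority nodes have moved, on average, after
    steps * dt units of simulated time."""
    n = n_majority + n_diverse
    drive = [1.0] * n_majority + [-1.0] * n_diverse
    x = [0.0] * n
    for _ in range(steps):
        force = list(drive)
        for i in range(n):
            for j in range(i + 1, n):
                # Stiff links inside the majority, slack links otherwise.
                both_majority = i < n_majority and j < n_majority
                k = stiff if both_majority else slack
                pull = k * (x[j] - x[i])
                force[i] += pull
                force[j] -= pull
        x = [xi + dt * f for xi, f in zip(x, force)]
    return sum(x[:n_majority]) / n_majority


for n_diverse in (0, 1, 3):
    travelled = majority_travel(10 - n_diverse, n_diverse)
    print(f"{n_diverse} diverse nodes: majority travelled {travelled:.2f}")
```

Because the coupling in this sketch is symmetric, the group's centroid drifts at the average of the drives, so nodes pushing the other way slow the majority even through slack links. The same run also shows the cost: the group covers less ground.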

I think that dimension reduction is inevitable in a stiffening network. In physical systems, where the nodes have mass, a stiff structure really only has two degrees of freedom: its direction of travel and its axis of rotation. This means that regardless of the number of initial dimensions, a stiff body’s motion reduces to two components. Looking at stampedes and panics, I’d say that this is true for behaviors as well, though causality could run in either direction. This is another reason that diversity helps keep a system away from dangerous conditions, but at the expense of efficiency.

Notes

- Such a collision should have been impossible. The entire Washington Metro system, made up of over one hundred miles of track, was wired to detect and control trains. When trains got too close to each other, they would automatically slow down. But that day, as Train 112 rounded a curve, another train sat stopped on the tracks ahead—present in the real world, but somehow invisible to the track sensors. Train 112 automatically accelerated; after all, the sensors showed that the track was clear. By the time the driver saw the stopped train and hit the emergency brake, the collision was inevitable. (Page 2)

- The second element of Perrow’s theory (of normal accidents) has to do with how much slack there is in a system. He borrowed a term from engineering: tight coupling. When a system is tightly coupled, there is little slack or buffer among its parts. The failure of one part can easily affect the others. Loose coupling means the opposite: there is a lot of slack among parts, so when one fails, the rest of the system can usually survive. (Page 25)

- Perrow called these meltdowns normal accidents. “A normal accident,” he wrote, “is where everyone tries very hard to play safe, but unexpected interaction of two or more failures (because of interactive complexity) causes a cascade of failures (because of tight coupling).” Such accidents are normal not in the sense of being frequent but in the sense of being natural and inevitable. “It is normal for us to die, but we only do it once,” he quipped. (Page 27)

- This is exactly what I see in my simulations and in modelling with graph Laplacians. Two factors affect the response of the network: the level of connectivity and the stiffness of the links. When the nodes have a velocity component, a sufficiently stiff network (either many somewhat stiff links or a few very stiff ones) has to behave as a single entity.

- These were unintended interactions between the glitch in the content filter, Talbot’s photo, other Twitter users’ reactions, and the resulting media coverage. When the content filter broke, it increased tight coupling because the screen now pulled in any tweet automatically. And the news that Starbucks had a PR disaster in the making spread rapidly on Twitter—a tightly coupled system by design. (Page 30)

- This approach—reducing complexity and adding slack—helps us escape from the danger zone. It can be an effective solution, one we’ll explore later in this book. But in recent decades, the world has actually been moving in the opposite direction: many systems that were once far from the danger zone are now in the middle of it. (Page 33)

- Today, smartphone videos create complexity because they link things that weren’t always connected (Page 37)

- For nearly thirty minutes, Knight’s trading system had gone haywire and sent out hundreds of unintended orders per second in 140 stocks. Those very orders had caused the anomalies that John Mueller and traders across Wall Street saw on their screens. And because Knight’s mistake roiled the markets in such a visible way, traders could reverse engineer its positions. Knight was a poker player whose opponents knew exactly what cards it held, and it was already all in. For thirty minutes, the company had lost more than $15 million per minute. (Page 41)

- Though a small software glitch caused Knight’s failure, its roots lay much deeper. The previous decade of technological innovation on Wall Street created the perfect conditions for the meltdown. Regulation and technology transformed stock trading from a fragmented, inefficient, relationship-based activity to a tightly connected endeavor dominated by computers and algorithms. Firms like Knight, which once used floor traders and phones to execute trades, had to adapt to a new world. (Page 42)

- This is an important point. There is short-term survival value in becoming homogeneous and tightly connected. Diversity only helps in the long run.

- As the crew battled the blowout, complexity struck again. The rig’s elaborate emergency systems were just too overwhelming. There were as many as thirty buttons to control a single safety system, and a detailed emergency handbook described so many contingencies that it was hard to know which protocol to follow. When the accident began, the crew was frozen. The Horizon’s safety systems paralyzed them. (Page 49)

- I think this may cut against the authors’ point. The complexity here is a form of diversity. The safety system was a high-dimensional system that required an effective user to be aligned with it, like a free climber on a cliff face. A user highly educated in the system could probably have made it work, even better than a big STOP button. But expecting that user is a mistake. The authors actually discuss this later when they describe how safety training was reduced to simple practices that ignored catastrophic events perceived as unlikely.

- “The real threat,” Greenberg explained, “comes from malicious actors that connect things together. They use a chain of bugs to jump from one system to the next until they achieve full code execution.” In other words, they exploit complexity: they use the connections in the system to move from the software that controls the radio and GPS to the computers that run the car itself. “As cars add more features,” Greenberg told us, “there are more opportunities for abuse.” And there will be more features: in driverless cars, computers will control everything, and some models might not even have a steering wheel or brake pedal. (Page 60)

- In this case it’s not the stiffness of the connections, it’s the density of connections.

- Attacks on cars, ATMs, and cash registers aren’t accidents. But they, too, originate from the danger zone. Complex computer programs are more likely to have security flaws. Modern networks are rife with interconnections and unexpected interactions that attackers can exploit. And tight coupling means that once a hacker has a foothold, things progress swiftly and can’t easily be undone. In fact, in all sorts of areas, complexity creates opportunities for wrongdoing, and tight coupling amplifies the consequences. It’s not just hackers who exploit the danger zone to do wrong; it’s also executives at some of the world’s biggest companies. (Page 62)

- By the year 2000, Fastow and his predecessors had created over thirteen hundred specialized companies to use in these complicated deals. “Accounting rules and regulations and securities laws and regulation are vague,” Fastow later explained. “They’re complex. . . . What I did at Enron and what we tended to do as a company [was] to view that complexity, that vagueness . . . not as a problem, but as an opportunity.” Complexity was an opportunity. (Page 69)

- I’m not sure how to fit this in, but I think there is something here about high-dimensional spaces being essentially invisible. This is the same thing as the safety system on the Deepwater Horizon.

- But like the core of a nuclear power plant, the truth behind such writing is difficult to observe. And research shows that unobservability is a key ingredient to news fabrications. Compared to genuine articles, falsified stories are more likely to be filed from distant locations and to focus on topics that lend themselves to the use of secret sources, such as war and terrorism; they are rarely about big public events like baseball games. (Page 77)

- More heuristics for map building

- Charles Perrow once wrote that “safety systems are the biggest single source of catastrophic failure in complex, tightly coupled systems.” (Page 85)

- Dimensions reduce through use, which is a kind of conversation between the users and the designers. Safety systems are rarely used, so this conversation doesn’t happen.

- Perrow’s matrix is helpful even though it doesn’t tell us what exactly that “crazy failure” will look like. Simply knowing that a part of our system—or organization or project—is vulnerable helps us figure out if we need to reduce complexity and tight coupling and where we should concentrate our efforts. It’s a bit like wearing a seatbelt. The reason we buckle up isn’t that we have predicted the exact details of an impending accident and the injuries we’ll suffer. We wear seatbelts because we know that something unforeseeable might happen. We give ourselves a cushion of time when cooking an elaborate holiday dinner not because we know what will go wrong but because we know that something will. “You don’t need to predict it to prevent it,” Miller told us. “But you do need to treat complexity and coupling as key variables whenever you plan something or build something.” (Page 88)

- A fundamental feature of complex systems is that we can’t find all the problems by simply thinking about them. Complexity can cause such strange and rare interactions that it’s impossible to predict most of the error chains that will emerge. But before they fall apart, complex systems give off warning signs that reveal these interactions. The systems themselves give us clues as to how they might unravel. (Page 141)

- Over the course of several years, Rerup conducted an in-depth study of global pharmaceutical powerhouse Novo Nordisk, one of the world’s biggest insulin producers. In the early 1990s, Rerup found, it was difficult for anyone at Novo Nordisk to draw attention to even serious threats. “You had to convince your own boss, his boss, and his boss that this was an issue,” one senior vice president explained. “Then he had to convince his boss that it was a good idea to do things in a different way.” But, as in the childhood game of telephone—where a message gets more and more garbled as it passes between people—the issues became oversimplified as they worked their way up the chain of command. “What was written in the original version of the report . . . and which was an alarm bell for the specialist,” the CEO told Rerup, “was likely to be deleted in the version that senior management read.” (Page 146)

- Dimension reduction, leading to stampede

- Once an issue has been identified, the group brings together ad hoc teams from different departments and levels of seniority to dig into how it might affect their business and to figure out what they can do to prevent problems. The goal is to make sure that the company doesn’t ignore weak signs of brewing trouble. (Page 147)

- Environmental awareness as a deliberate counter to dimension reduction

- “We show that a deviation from the group opinion is regarded by the brain as a punishment,” said the study’s lead author, Vasily Klucharev. And the error message combined with a dampened reward signal produces a brain impulse indicating that we should adjust our opinion to match the consensus. Interestingly, this process occurs even if there is no reason for us to expect any punishment from the group. As Klucharev put it, “This is likely an automatic process in which people form their own opinion, hear the group view, and then quickly shift their opinion to make it more compliant with the group view.” (Page 154)

- Reinforcement Learning Signal Predicts Social Conformity

- Vasily Klucharev

- We often change our decisions and judgments to conform with normative group behavior. However, the neural mechanisms of social conformity remain unclear. Here we show, using functional magnetic resonance imaging, that conformity is based on mechanisms that comply with principles of reinforcement learning. We found that individual judgments of facial attractiveness are adjusted in line with group opinion. Conflict with group opinion triggered a neuronal response in the rostral cingulate zone and the ventral striatum similar to the “prediction error” signal suggested by neuroscientific models of reinforcement learning. The amplitude of the conflict-related signal predicted subsequent conforming behavioral adjustments. Furthermore, the individual amplitude of the conflict-related signal in the ventral striatum correlated with differences in conforming behavior across subjects. These findings provide evidence that social group norms evoke conformity via learning mechanisms reflected in the activity of the rostral cingulate zone and ventral striatum.

- When people agreed with their peers’ incorrect answers, there was little change in activity in the areas associated with conscious decision-making. Instead, the regions devoted to vision and spatial perception lit up. It’s not that people were consciously lying to fit in. It seems that the prevailing opinion actually changed their perceptions. If everyone else said the two objects were different, a participant might have started to notice differences even if the objects were identical. Our tendency for conformity can literally change what we see. (Page 155)

- Gregory Berns

- Dr. Berns specializes in the use of brain imaging technologies to understand human – and now, canine – motivation and decision-making. He has received numerous grants from the National Institutes of Health, National Science Foundation, and the Department of Defense and has published over 70 peer-reviewed original research articles.

- Neurobiological Correlates of Social Conformity and Independence During Mental Rotation

- Background: When individual judgment conflicts with a group, the individual will often conform his judgment to that of the group. Conformity might arise at an executive level of decision making, or it might arise because the social setting alters the individual’s perception of the world.

- Methods: We used functional magnetic resonance imaging and a task of mental rotation in the context of peer pressure to investigate the neural basis of individualistic and conforming behavior in the face of wrong information.

- Results: Conformity was associated with functional changes in an occipital-parietal network, especially when the wrong information originated from other people. Independence was associated with increased amygdala and caudate activity, findings consistent with the assumptions of social norm theory about the behavioral saliency of standing alone.

- Conclusions: These findings provide the first biological evidence for the involvement of perceptual and emotional processes during social conformity.

- The Pain of Independence: Compared to behavioral research of conformity, comparatively little is known about the mechanisms of non-conformity, or independence. In one psychological framework, the group provides a normative influence on the individual. Depending on the particular situation, the group’s influence may be purely informational – providing information to an individual who is unsure of what to do. More interesting is the case in which the individual has definite opinions of what to do but conforms due to a normative influence of the group due to social reasons. In this model, normative influences are presumed to act through the aversiveness of being in a minority position.

- A Neural Basis for Social Cooperation

- Cooperation based on reciprocal altruism has evolved in only a small number of species, yet it constitutes the core behavioral principle of human social life. The iterated Prisoner’s Dilemma Game has been used to model this form of cooperation. We used fMRI to scan 36 women as they played an iterated Prisoner’s Dilemma Game with another woman to investigate the neurobiological basis of cooperative social behavior. Mutual cooperation was associated with consistent activation in brain areas that have been linked with reward processing: nucleus accumbens, the caudate nucleus, ventromedial frontal/orbitofrontal cortex, and rostral anterior cingulate cortex. We propose that activation of this neural network positively reinforces reciprocal altruism, thereby motivating subjects to resist the temptation to selfishly accept but not reciprocate favors.

- Gregory Berns

- These results are alarming because dissent is a precious commodity in modern organizations. In a complex, tightly coupled system, it’s easy for people to miss important threats, and even seemingly small mistakes can have huge consequences. So speaking up when we notice a problem can make a big difference. (Page 155)

- KRAWCHECK: I think when you get diverse groups together who’ve got these different backgrounds, there’s more permission in the room—as opposed to, “I can’t believe I don’t understand this and I’d better not ask because I might lose my job.” There’s permission to say, “I come from someplace else, can you run that by me one more time?” And I definitely saw that happen. But as time went on, the management teams became less diverse. And in fact, the financial services industry went into the downturn white, male and middle aged. And it came out whiter, maler and middle-aged-er. (Page 176)

- “The diverse markets were much more accurate than the homogeneous markets,” said Evan Apfelbaum, an MIT professor and one of the study’s authors. “In homogeneous markets, if someone made a mistake, then others were more likely to copy it,” Apfelbaum told us. “In diverse groups, mistakes were much less likely to spread.” (Page 177)

- Having minority traders wasn’t valuable because they contributed unique perspectives. Minority traders helped markets because, as the researchers put it, “their mere presence changed the tenor of decision making among all traders.” In diverse markets, everyone was more skeptical. (Page 178)

- In diverse groups, we don’t trust each other’s judgment quite as much, and we call out the naked emperor. And that’s very valuable when dealing with a complex system. If small errors can be fatal, then giving others the benefit of the doubt when we think they are wrong is a recipe for disaster. Instead, we need to dig deeper and stay critical. Diversity helps us do that. (Page 180)

- Ironically, lab experiments show that while homogeneous groups do less well on complex tasks, they report feeling more confident about their decisions. They enjoy the tasks they do as a group and think they are doing well. (Page 182)

- Another stampede contribution

- The third issue was the lack of productive conflict. When amateur directors were just a small minority on a board, it was hard for them to challenge the experts. On a board with many bankers, one CEO told the researchers, “Everybody respects each other’s ego at that table, and at the end of the day, they won’t really call each other out.” (Page 193)

- Need to figure out what productive conflict is and how to measure it

- Diversity is like a speed bump. It’s a nuisance, but it snaps us out of our comfort zone and makes it hard to barrel ahead without thinking. It saves us from ourselves. (Page 197)

- A stranger is someone who is in a group but not of the group. Simmel’s archetypal stranger was the Jewish merchant in a medieval European town—someone who lived in the community but was different from the insiders. Someone close enough to understand the group, but at the same time, detached enough to have an outsider’s perspective. (Page 199)

- Can AI be trained to be a stranger?

- But Volkswagen didn’t just suffer from an authoritarian culture. As a corporate governance expert noted, “Volkswagen is well known for having a particularly poorly run and structured board: insular, inward-looking, and plagued with infighting.” On the firm’s twenty-member supervisory board, ten seats were reserved for Volkswagen workers, and the rest were split between senior managers and the company’s largest shareholders. Both Piëch and his wife, a former kindergarten teacher, sat on the board. There were no outsiders. This kind of insularity went well beyond the boardroom. As Milne put it, “Volkswagen is notoriously anti-outsider in terms of culture. Its leadership is very much homegrown.” And that leadership is grown in a strange place. Wolfsburg, where Volkswagen has its headquarters, is the ultimate company town. “It’s this incredibly peculiar place,” according to Milne. “It didn’t exist eighty years ago. It’s on a wind-swept plain between Hanover and Berlin. But it’s the richest town in Germany—thanks to Volkswagen. VW permeates everything. They’ve got their own butchers, they’ve got their own theme park; you don’t escape VW there. And everybody comes through this system.” (Page 209)

- Most companies have lots of people with different skills. The problem is, when you bring people together to work on the same problem, if all they have are those individual skills . . . it’s very hard for them to collaborate. What tends to happen is that each individual discipline represents its own point of view. It basically becomes a negotiation at the table as to whose point of view wins, and that’s when you get gray compromises where the best you can achieve is the lowest common denominator between all points of view. The results are never spectacular but, at best, average. (Page 236)

- The idea here is that there is either total consensus and groupthink, or grinding compromise. The authors are focussing too much on the ends of the spectrum. The environmentally aware, social middle is the sweet spot where flocking occurs.

- Or think about driverless cars. They will almost certainly be safer than human drivers. They’ll eliminate accidents due to fatigued, distracted, and drunk driving. And if they’re well engineered, they won’t make the silly mistakes that we make, like changing lanes while another car is in our blind spot. At the same time, they’ll be susceptible to meltdowns—brought on by hackers or by interactions in the system that engineers didn’t anticipate. (Page 242)

- We can design safer systems, make better decisions, notice warning signs, and learn from diverse, dissenting voices. Some of these solutions might seem obvious: Use structured tools when you face a tough decision. Learn from small failures to avoid big ones. Build diverse teams and listen to skeptics. And create systems with transparency and plenty of slack. (Page 242)

The number of renewable goods with which an economy is endowed is plotted against the number of pairs of symbol strings in the grammar, which captures the hypothetical “laws of substitutability and complementarity.” A curve separates a subcritical regime below the curve and a supracritical regime above the curve. As the diversity of renewable resources or the complexity of the grammar rules increases, the system explodes with a diversity of products. (Page 193)
