Normal Accidents: Living with High-Risk Technologies (1999 ed)

Author

Charles Perrow (Scholar search): An organizational theorist, he is the author of The Radical Attack on Business, Organizational Analysis: A Sociological View, Complex Organizations: A Critical Essay, and Normal Accidents: Living with High Risk Technologies. His interests include the development of bureaucracy in the 19th Century; the radical movements of the 1960s; Marxian theories of industrialization and of contemporary crises; accidents in such high risk systems as nuclear plants, air transport, DNA research and chemical plants; protecting the nation’s critical infrastructure; the prospects for democratic work organizations; and the origins of U.S. capitalism.

Overview

This book describes a type of catastrophic accident that emerges in particular types of complex systems. Perrow calls these accidents “normal” because they appear to be inevitable, yet are unpredictable in their specifics.

Normal accidents occur in systems that have three common characteristics:

- They are tightly coupled. Behavior in one part rapidly affects other parts.

- They are densely connected. One part may affect many other parts.

- Their processes are opaque: they cannot be observed directly, so their behavior must be inferred.

In addition, systems that involve the transformation of their input, such as nuclear power, petrochemical plants, and DNA manipulation, are more likely to be tightly coupled, densely connected, and opaque, and are therefore more prone to catastrophe.

A sub-theme is that these systems are always “accidents waiting to happen”, and as such an obvious cause can be found in a post-mortem. However, systems that were inspected months before an accident are often found to be in good working order. Looking more closely, the expectation of finding a problem after a catastrophe makes finding the problem more likely. Looking at a running system through the lens of an imaginary catastrophe should be an effective way to tease out potential problems.

My more theoretical thoughts

As in Meltdown, which was based in part on this book, the systems described here look like networks characterized by the degree and stiffness of their connections, combined with an overall velocity of the networked system through belief space (described here as production pressure). That implies that modelling them using something like Graph Laplacians might make sense, though the equations that describe the “weight” of the nodes, the “stiffness” of the edges, and the inertial characteristics of the system as a whole are unclear.
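
A minimal sketch of what the Graph Laplacian idea could look like, under assumptions that are mine and not Perrow's: each part of the system is a node holding a scalar "disturbance", each edge weight stands in for coupling stiffness, and a disturbance spreads by plain diffusion, dx/dt = -Lx. The five-part chain, the stiffness values, and the time step are all hypothetical; node "weight" and system inertia are left out because, as noted above, it is unclear how to model them.

```python
def laplacian(weights):
    """Graph Laplacian L = D - W for a symmetric weight matrix W (list of lists)."""
    n = len(weights)
    return [
        [(sum(weights[i]) if i == j else 0.0) - weights[i][j] for j in range(n)]
        for i in range(n)
    ]

def spread(weights, x0, steps, dt=0.01):
    """Forward-Euler integration of dx/dt = -L x (assumed diffusion model)."""
    lap = laplacian(weights)
    x = list(x0)
    n = len(x)
    for _ in range(steps):
        flow = [sum(lap[i][j] * x[j] for j in range(n)) for i in range(n)]
        x = [x[i] - dt * flow[i] for i in range(n)]
    return x

def chain(n, stiffness):
    """Weight matrix for n parts connected in a line, all edges equally stiff."""
    w = [[0.0] * n for _ in range(n)]
    for i in range(n - 1):
        w[i][i + 1] = w[i + 1][i] = stiffness
    return w

x0 = [1.0, 0.0, 0.0, 0.0, 0.0]  # the disturbance starts at part 0
loose = spread(chain(5, stiffness=0.1), x0, steps=100)
tight = spread(chain(5, stiffness=5.0), x0, steps=100)
print("loose:", [round(v, 4) for v in loose])
print("tight:", [round(v, 4) for v in tight])
```

In this toy model the same initial failure stays mostly local in the loosely coupled chain, while in the stiff chain it reaches the far end within the same amount of simulated time. That is only the qualitative behavior the notes describe, not a validated model of any real plant.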

Notes

Introduction

- Rather, I will dwell upon characteristics of high-risk technologies that suggest that no matter how effective conventional safety devices are, there is a form of accident that is inevitable. (Page 3)

- Most high-risk systems have some special characteristics, beyond their toxic or explosive or genetic dangers, that make accidents in them inevitable, even “normal.” This has to do with the way failures can interact and the way the system is tied together. It is possible to analyze these special characteristics and in doing so gain a much better understanding of why accidents occur in these systems, and why they always will. If we know that, then we are in a better position to argue that certain technologies should be abandoned, and others, which we cannot abandon because we have built much of our society around them, should be modified. (Page 4)

- No one dreamed that when X failed, Y would also be out of order and the two failures would interact so as to both start a fire and silence the fire alarm. (Page 4)

- This interacting tendency is a characteristic of a system, not of a part or an operator; we will call it the “interactive complexity” of the system. (Page 4)

- For some systems that have this kind of complexity, such as universities or research and development labs, the accident will not spread and be serious because there is a lot of slack available, and time to spare, and other ways to get things done. But suppose the system is also “tightly coupled,” that is, processes happen very fast and can’t be turned off, the failed parts cannot be isolated from other parts, or there is no other way to keep the production going safely. Then recovery from the initial disturbance is not possible; it will spread quickly and irretrievably for at least some time. Indeed, operator action or the safety systems may make it worse, since for a time it is not known what the problem really is. (Page 5)

- these systems require organizational structures that have large internal contradictions, and technological fixes that only increase interactive complexity and tighten the coupling; they become still more prone to certain kinds of accidents. (Page 5)

- If interactive complexity and tight coupling—system characteristics—inevitably will produce an accident, I believe we are justified in calling it a normal accident, or a system accident. The odd term normal accident is meant to signal that, given the system characteristics, multiple and unexpected interactions of failures are inevitable. (Page 5)

- The cause of the accident is to be found in the complexity of the system. That is, each of the failures—design, equipment, operators, procedures, or environment—was trivial by itself. Such failures are expected to occur since nothing is perfect, and we normally take little notice of them. (Page 7)

- Most of the time we don’t notice the inherent coupling in our world, because most of the time there are no failures, or the failures that occur do not interact. But all of a sudden, things that we did not realize could be linked (buses and generators, coffee and a loaned key) became linked. The system is suddenly more tightly coupled than we had realized. (Page 8)

- Comment: This is the central point in the stampeding of things like self-driving cars. The system is more tightly coupled than we realize.

- It is normal in the sense that it is an inherent property of the system to occasionally experience this interaction. Three Mile Island was such a normal or system accident, and so were countless others that we shall examine in this book. We have such accidents because we have built an industrial society that has some parts, like industrial plants or military adventures, that have highly interactive and tightly coupled units. Unfortunately, some of these have high potential for catastrophic accidents. (Page 8)

- This dependence is known as tight coupling. On the other hand, events in a system can occur independently as we noted with the failure of the generator and forgetting the keys. These are loosely coupled events, because although at this time they were both involved in the same production sequence, one was not caused by the other. (Page 8)

- In complex industrial, space, and military systems, the normal accident generally (not always) means that the interactions are not only unexpected, but are incomprehensible for some critical period of time. (Page 8)

- But if, as we shall see time and time again, the operator is confronted by unexpected and usually mysterious interactions among failures, saying that he or she should have zigged instead of zagged is possible only after the fact. Before the accident no one could know what was going on and what should have been done. Sometimes the errors are bizarre. We will encounter “noncollision course collisions,” for example, where ships that were about to pass in the night suddenly turn and ram each other. But careful inquiry suggests that the mariners had quite reasonable explanations for their actions; it is just that the interaction of small failures led them to construct quite erroneous worlds in their minds, and in this case these conflicting images led to collision. (Page 9)

- Small beginnings all too often cause great events when the system uses a “transformation” process rather than an additive or fabricating one. Where chemical reactions, high temperature and pressure, or air, vapor, or water turbulence is involved, we cannot see what is going on or even, at times, understand the principles. In many transformation systems we generally know what works, but sometimes do not know why. These systems are particularly vulnerable to small failures that “propagate” unexpectedly, because of complexity and tight coupling. (Page 10)

- Comment: This is a surprisingly apt description of a deep neural network and a good explanation of why they are risky.

- High-risk systems have a double penalty: because normal accidents stem from the mysterious interaction of failures, those closest to the system, the operators, have to be able to take independent and sometimes quite creative action. But because these systems are so tightly coupled, control of operators must be centralized because there is little time to check everything out and be aware of what another part of the system is doing. An operator can’t just do her own thing; tight coupling means tightly prescribed steps and invariant sequences that cannot be changed. But systems cannot be both decentralized and centralized at the same time; (Page 10)

- Comment: And the pressure to make systems efficient leads to tighter coupling and treats diversity as a penalty, which it almost always is in the short term. Diversity prevents catastrophes, but inhibits rewards/profits.

- when we move away from the individual dam or mine and take into account the larger system in which they exist, we find the “eco-system accident,” an interaction of systems that were thought to be independent but are not because of the larger ecology. (Page 14)

- Comment: I think financial crashes may also be manifestations of an eco-system accident. Credit-default swaps are similar to DDT in that they concentrate risk and move it up the food chain.

Chapter 1: Normal Accident at Three Mile Island

- We are now, incredibly enough, only thirteen seconds into the “transient,” as engineers call it. (It is not a perversely optimistic term meaning something quite temporary or transient, but rather it means a rapid change in some parameter, in this case, temperature.) In these few seconds there was a false signal causing the condensate pumps to fail, two valves for emergency cooling out of position and the indicator obscured, a PORV that failed to reseat, and a failed indicator of its position. The operators could have been aware of none of these. (Page 21)

- Comment: This could be equivalent to a navigation system routing a fleet of cars through a recent disaster, where the road is lethal, but the information about that has not entered the system. (examples)

- What they didn’t know, and couldn’t know, was that with the PORV open and the two feedwater valves blocked, preventing the removal of residual heat, they already had a LOCA, but not from a pipe break. The rise in pressure in the pressurizer was probably due to the steam voids rapidly forming because the core was close to becoming uncovered. They thought they were avoiding a LOCA when they were in one and were making it worse. With the PORV stuck open, the danger of going solid in the pressurizer was reduced because the open valve would provide some relief. But no one knew it was open. (Page 26)

- We will encounter this man’s dilemma a few more times in this book; it goes to the core of a common organizational problem. In the face of uncertainty, we must, of course, make a judgment, even if only a tentative and temporary one. Making a judgment means we create a “mental model” or an expected universe. (Page 27)

- Comment: This is belief space, and these models are often created as stories with sequences. Having a headache can create a story of something temporary that will be fixed with a pill, or something serious that requires a trip to the hospital. The type of story that gets told depends very much on the location in the belief space the user is in. Someone who is worried about a stroke is much more likely to think that a headache is serious and build a narrative around that.

- Despite the fact that this is no proper test of the appropriateness of alternative B rather than A, it serves to “confirm” your decision. In so believing, you are actually creating a world that is congruent with your interpretation, even though it may be the wrong world. It may be too late before you find that out. (Page 27)

- These are not expected sequences in a production or safety system; they are multiple failures that interacted in an incomprehensible manner (Page 31)

- Comment: This could be incomprehensible for any intelligent system, not just human.

Chapter 2: Nuclear Power as a High-Risk System: Why We Have Not Had More TMIs–But Will Soon

- Shoddy construction and inadvertent errors, intimidation and actual deception—these are part and parcel of industrial life. No industry is without these problems, just as no valve can be made failure-proof. Normally, the consequences are not catastrophic. They may be, however, if you build systems with catastrophic potential. (Page 37)

- A bit more revealing is another discussion of seven “criticality” accidents. If plutonium, which is exceedingly volatile and hard to machine or handle, experiences the proper conditions, it can attain a self-sustaining fission chain reaction. Criticality depends upon the quantity of the plutonium, the size, shape, and material of the vessel that holds it, the nature of any solvents or dilutants, and even adjacent material, which may reflect neutrons back into the plutonium. It is apparently hard to know when these conditions might be just right. (Page 55)

Chapter 3: Complexity, Coupling, and Catastrophe

- But there are also degrees of disturbance to systems. The rally will not be disturbed in any perceptible way if I show up with a scratch on my ’57 De Soto, or if, ashamed of the state of my car, I do not go at all. But my system might be greatly disturbed if I stayed home rather than meeting or impressing people. The degree of disturbance, then, is related to what we define as the system. If the rally is the system under analysis, there is no accident. If that part of my life concerned with custom cars is the system, there is an accident. A steam generator tube failure in a nuclear plant can hardly be anything other than an accident for that plant and for that utility. Yet it may or may not have an appreciable effect upon the nuclear power “system” in the United States. (Page 64)

- Most of the work concerned with safety and accidents deals, rightly enough, with what I call first-party victims, and to some extent second-party victims. But in this book we are concerned with third- and fourth-party victims. Briefly, first-party victims are the operators; second-party victims are nonoperating personnel or system users such as passengers on a ship; third-party victims are innocent bystanders; fourth-party victims are fetuses and future generations. Generally, as we move from operators to future generations, the number of persons involved rises geometrically, risky activities are less well compensated, and the risks taken are increasingly unknown ones. (Page 67)

- The following list presents these and adds the definition of the two types of accidents: component failure accidents and system accidents, which we will now take up. (Page 70)

- Systems are divided into four levels of increasing aggregation: parts, units, subsystems, and system.

- Incidents involve damage to or failures of parts or a unit only, even though the failure may stop the output of the system or affect it to the extent that it must be stopped.

- Accidents involve damage to subsystems or the system as a whole, stopping the intended output or affecting it to the extent that it must be halted promptly.

- Component failure accidents involve one or more component failures (part, unit, or subsystem) that are linked in an anticipated sequence.

- System accidents involve the unanticipated interaction of multiple failures.

- A system accident, in our definition, must have multiple failures, and they are likely to be in reasonably independent units or subsystems. But system accidents, as with all accidents, start with a component failure, most commonly the failure of a part, say a valve or an operator error. It is not the source of the accident that distinguishes the two types, since both start with component failures; it is the presence or not of multiple failures that interact in unanticipated ways. (Page 71)
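
The definitions above can be read as a small decision procedure. The sketch below is my own encoding, not something from the book: the `Level` enum, the `classify` function, and its three inputs (how far the damage reached, how many failures were involved, and whether their interaction was anticipated) are hypothetical names chosen for illustration.

```python
from enum import IntEnum

class Level(IntEnum):
    """Levels of aggregation; parts and units both sit below the subsystem."""
    PART = 1
    UNIT = 2
    SUBSYSTEM = 3
    SYSTEM = 4

def classify(damage_level, n_failures, anticipated):
    """Classify an untoward event using the definitions on Pages 70-71.

    damage_level: highest Level that was damaged
    n_failures:   number of component failures involved
    anticipated:  True if the failures were linked in an anticipated sequence
    """
    if damage_level <= Level.UNIT:
        return "incident"                      # damage confined to parts or a unit
    if n_failures > 1 and not anticipated:
        return "system accident"               # multiple failures, unanticipated interaction
    return "component failure accident"        # anticipated sequence of failures

print(classify(Level.PART, 1, True))        # incident
print(classify(Level.SUBSYSTEM, 2, True))   # component failure accident
print(classify(Level.SYSTEM, 4, False))     # system accident
```

Note that the starting point of the event is not an input at all, which matches the point that both kinds of accident begin with a component failure.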

- Incidents are overwhelmingly the most common untoward system events. Accidents are far less frequent. Among accidents, component failure accidents are far more frequent than system accidents. I have no reliable way to estimate these frequencies. For the systems analyzed in this book the richest body of data comes from the safety-related failures that nuclear plants in the United States are required to report. Roughly 3,000 Licensee Event Reports are filed each year by the 70 or so plants. Based upon the literature discussing these reports, I estimate 300 of the 3,000 events might be called accidents; 15 to 30 of these might be system accidents. (Page 71)

- Comment: This looks like a power-law relationship. If true, then it would be a nice way of indicating the frequencies of rarer types of accidents based on the more common types.
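
A quick check of the arithmetic behind that impression, using only the figures quoted above. Three tiers are far too few to confirm a power law, and a constant ratio between tiers is strictly a geometric decay; it would correspond to a power law only if the severity of the tiers also scaled geometrically, which is an assumption on my part.

```python
import math

reports = 3000               # Licensee Event Reports per year (Page 71)
accidents = 300              # Perrow's estimate
system_accidents = (15, 30)  # Perrow's estimated range

reports_per_accident = reports / accidents
accidents_per_system_accident = [accidents / n for n in system_accidents]

print(reports_per_accident)           # 10.0
print(accidents_per_system_accident)  # [20.0, 10.0]

# Drop in log10(frequency) from one tier to the next.
log_drops = [math.log10(reports_per_accident)] + [
    math.log10(r) for r in accidents_per_system_accident
]
print([round(d, 2) for d in log_drops])  # [1.0, 1.3, 1.0]
```

Each step up in severity costs roughly one order of magnitude in frequency, which is what makes the relationship look like a straight line on a log scale.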

- The notion of baffling interactions is increasingly familiar to all of us. It characterizes our social and political world as well as our technological and industrial world. As systems grow in size and in the number of diverse functions they serve, and are built to function in ever more hostile environments, increasing their ties to other systems, they experience more and more incomprehensible or unexpected interactions. They become more vulnerable to unavoidable system accidents. (Page 72)

- Comment: The central premise of Stampede Theory: This emergent property of tightly coupled systems is not specific to a technology.

- These are “linear” interactions: production is carried out through a series or sequence of steps laid out in a line. It doesn’t matter much whether there are 1,000 or 1,000,000 parts in the line. It is easy to spot the failure and we know what its effect will be on the adjacent stations. There will be product accumulating upstream and incomplete product going out downstream of the failure point. Most of our planned life is organized that way. (Page 72)

- Comment: A linear system is a list of transformations. I think that is also the general form of a story, but not a game or a map. This means that linear systems in physical or belief space are inherently understandable. The trick in belief space is to determine what is important at what scale to achieve meaningful understanding.

- But what if parts, or units, or subsystems (that is, components) serve multiple functions? For example, a heater might both heat the gas in tank A and also be used as a heat exchanger to absorb excess heat from a chemical reactor. If the heater fails, tank A will be too cool for the recombination of gas molecules expected, and at the same time, the chemical reactor will overheat as the excess heat fails to be absorbed. This is a good design for a heater, because it saves energy. But the interactions are no longer linear. The heater has what engineers call a “common-mode” function—it services two other components, and if it fails, both of those “modes” (heating the tank, cooling the reactor) fail. This begins to get more complex. (Page 73)

- I will refer to these kinds of interactions as complex interactions, suggesting that there are branching paths, feedback loops, jumps from one linear sequence to another because of proximity and certain other features we will explore shortly. The connections are not only adjacent, serial ones, but can multiply as other parts or units or subsystems are reached. (Page 75)

- Comment: Modeled by graph Laplacians

- To summarize our work so far: Linear interactions are those interactions of one component in the DEPOSE system (Design, Equipment, Procedures, Operators, Supplies and materials, and Environment) with one or more components that precede or follow it immediately in the sequence of production. Complex interactions are those in which one component can interact with one or more other components outside of the normal production sequence, either by design or not by design. (Page 77)
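
The linear/complex distinction can be sketched as a check against the production sequence. This is a simplification of my own: the component names and the four-step sequence are hypothetical, loosely following the heater example from Page 73, and "complex" here just means "not between immediate neighbors in the sequence".

```python
def classify_interaction(sequence, a, b):
    """Label the interaction between components a and b.

    'linear'  : both sit in the production sequence and are directly adjacent.
    'complex' : anything else (a jump across the sequence, or a component
                that is not part of the sequence at all).
    """
    if a in sequence and b in sequence:
        if abs(sequence.index(a) - sequence.index(b)) == 1:
            return "linear"
    return "complex"

# Hypothetical production line.
sequence = ["feed", "tank_A", "reactor", "separator"]
interactions = [
    ("feed", "tank_A"),
    ("tank_A", "reactor"),
    ("heater", "tank_A"),   # the heater warms the gas in tank A
    ("heater", "reactor"),  # the same heater absorbs excess reactor heat
]
labels = {pair: classify_interaction(sequence, *pair) for pair in interactions}
for pair, label in labels.items():
    print(pair, "->", label)
```

The heater shows up in two interactions that are both outside the sequence, which is the common-mode situation: one failure takes out heating for the tank and cooling for the reactor at once.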

- These problems exist in all industrial and transportation systems, but they are greatly magnified in systems with many complex interactions. This is because interactions, caused by proximity, common mode connections, or unfamiliar or unintended feedback loops, require many more probes of system conditions, and many more alterations of the conditions. Much more is simply invisible to the controller. The events go on inside vessels, or inside airplane wings, or in the spacecraft’s service module, or inside computers. Complex systems tend to have elaborate control centers not because they make life easier for the operators, saving steps or time, nor because there is necessarily more machinery to control, but because components must interact in more than linear, sequential ways, and therefore may interact in unexpected ways. (Page 82)

- Comment: Machine learning takes this problem and scales it up to the point where humans can’t comprehend the interactions. Consider this abstract from December 10, 2018 on arXiv: “As demonstration, we fit a 10-billion parameter ‘Bayesian Transformer’ on 512 TPUv2 cores, which replaces attention layers with their Bayesian counterpart.”

- In complex systems, where not even a tip of an iceberg is visible, the communication must be exact, the dial correct, the switch position obvious, the reading direct and “on-line.” (Page 84)

- Comment: True for any stiff, dense system

- The problem of indirect or inferential information sources is compounded by the lack of redundancy available to complex systems. If we stopped to notice, we would observe that our daily life is full of missed or misunderstood signals and faulty information. A great deal of our speech is devoted to redundancy—saying the same thing over and over, or repeating it in a slightly different way. We know from experience that the person we are talking to may be in a different cognitive framework, framing our remarks to “hear” that which he expects to hear, not what he is being told. The listener suppresses such words as “not” or “no” because he doesn’t expect to hear them. Indeed, he does not “hear” them, in the literal sense of processing in his brain the sounds that enter the ear. All sorts of trivial misunderstandings, and some quite serious ones, occur in normal conversation. We should not be surprised, then, if ambiguous or indirect information sources in complex systems are subject to misinterpretation. (Page 84)

- Comment: Stephens shows this with fMRI

- accidents continue to plague transformation processes that are fifty years old. These are processes that can be described, but not really understood. They were often discovered through trial and error, and what passes for understanding is really only a description of something that works. (Page 85)

- Comment: This is also true with machine learning. We don’t know why these algorithms are so effective and what their limits are. Or even their points of greatest sensitivity.

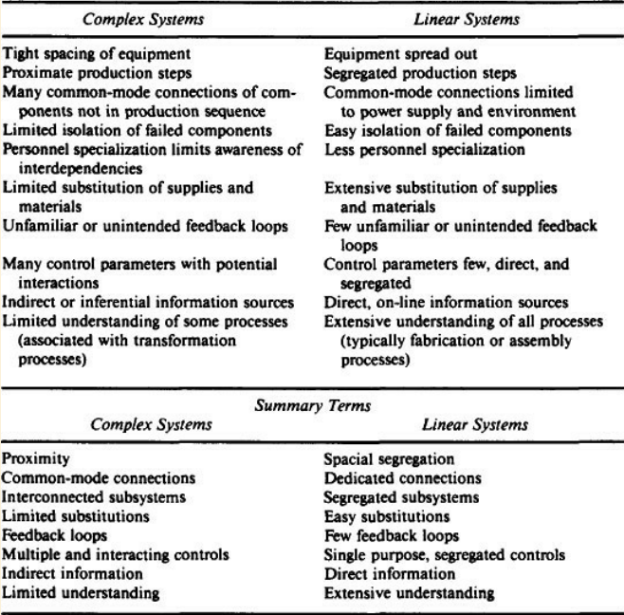

- To summarize, complex systems are characterized by (Page 86):

- Proximity of parts or units that are not in a production sequence;

- many common mode connections between components (parts, units, or subsystems) not in a production sequence;

- unfamiliar or unintended feedback loops;

- many control parameters with potential interactions;

- indirect or inferential information sources; and

- limited understanding of some processes.

- Linear systems lack the common-mode connections that require proximity. It is also a design criterion to separate various stages of production for sheer ease of maintenance access or replacement of equipment. Linear systems not only have spatial segregation of separate phases of production, but within production sequences the links are few and sequential, allowing damaged components to be pulled out with minimal disturbance to the rest of the system. (Page 86)

- Though I don’t want to claim a vast difference between employees in complex and linear systems, the latter appear to have fewer specialized and esoteric skills, allowing more awareness of interdependencies if they appear. The welder in a nuclear plant is more specialized (and specially rated), and presumably more isolated from other personnel, than the welder in a fabrication plant. Specialized personnel tend not to bridge the wide range of possible interactions; generalists, rather than specialists, are perhaps more likely to see unexpected connections and cope with them. (Page 87)

- Comment: Linear systems are lower-dimensional. In fact, what is called complex here is sometimes just high-dimensional and at other times truly complex. Feedback loops are a sign of true complexity.

(page 88)

- Loosely coupled systems, whether for good or ill, can incorporate shocks and failures and pressures for change without destabilization. Tightly coupled systems will respond more quickly to these perturbations, but the response may be disastrous. Both types of systems have their virtues and vices. (Page 92)

- Loosely coupled systems are said to have “equifinality”—many ways to skin the cat; tightly coupled ones have “unifinality.” (Page 94)

- Comment: A tightly coupled system can only behave as a single individual, made up of tightly connected parts (a body). The behavior of loosely coupled systems is more like that of populations. In humans (most animals?), the need for novelty provides velocity to the members of the population and is also a driver for coordination among systems that would otherwise be much more loosely coupled?

- Tightly coupled systems have little slack. Quantities must be precise; resources cannot be substituted for one another; wasted supplies may overload the process; failed equipment entails a shutdown because the temporary substitution of other equipment is not possible. No organization makes a virtue out of wasting supplies or equipment, but some can do so without bringing the system down or damaging it. In loosely coupled systems, supplies and equipment and human power can be wasted without great cost to the system. Something can be done twice if it is not correct the first time; one can temporarily get by with lower quality in supplies or products in the production line. The lower quality goods may have to be rejected in the end, but the technical system is not damaged in the meantime. (Page 94)

- In tightly coupled systems the buffers and redundancies and substitutions must be designed in; they must be thought of in advance. In loosely coupled systems there is a better chance that expedient, spur-of-the-moment buffers and redundancies and substitutions can be found, even though they were not planned ahead of time. (Page 95)

- Comment: This is why diversity matters: it loosens the coupling. The question is how to determine (a) how much friction is needed for a given system, and (b) the best way to inject diversity or other forms of friction.

- Tightly coupled systems are not completely devoid of unplanned safety devices. In two of the most famous nuclear plant accidents, Browns Ferry and TMI, imaginative jury-rigging was possible and operators were able to save the systems through fortuitous means. At TMI two pumps were put into service to keep the coolant circulating, even though neither was designed for core cooling. Subjected to intense radiation they were not designed to survive; one of them failed rather quickly, but the other kept going for days, until natural circulation could be established. Something more complex but similar took place at Browns Ferry. The industry claimed that the recovery proved that the safety features worked; but the designed-in ones did not work. (Page 95)

- The placement of systems is based entirely on subjective judgments on my part; at present there is no reliable way to measure these two variables, interaction and coupling. (Page 96)

- Comment: Graph Laplacians? The system is a network. That network can be tiny or huge. Characterizing the nodes and edges at scale is difficult.

Chapter 4: Petrochemical Plants

- Our discussion of nuclear power plants should make the following observations familiar enough. There was organizational ineptitude: they were knowingly short of engineering talent, and the chief engineer had left; there was a hasty decision on the by-pass, a failure to get expert advice, and most probably, strong production pressures. But as was noted in the case of TMI and other nuclear plants, and as will be apparent from other chapters, this is the normal condition for organizations; we should congratulate ourselves when they manage to run close to expectations. Had the pipe held out a short time longer until the reactor was repaired, and a new chief engineer been hired, and had a governmental inquiry board then come around, they could have concluded it was a well-run, safe plant. Once there is an accident, one looks for and easily finds the great causes for the great event. There were unheeded warning signals—the by-pass pipe had been noted to move up and down slightly during operation, surely an irregular bit of behavior; and there were unexplained anomalies in pressure and temperature and hydrogen consumption. But these were warnings only in retrospect. In these complex systems, minor warnings are probably always available for recall once there is an occasion, but if we shut down for every little thing… (Page 112)

- The report also faults the operators for not closely watching the indicators that monitored the flow of synthesis gas through the heater, which would have disclosed that something was wrong. But the report goes on to acknowledge that “the flow indicators were considered unreliable because there was hardly any indication of flow during both normal operations and in start-up conditions, especially when starting up both converters simultaneously,” as they were now doing. (Note this trivial interdependency of the two converters and their effect upon flow.) The operators, then, were blamed for not monitoring a flow that was so faint it could not be reliably measured. It hardly mattered in any case, since both flow indicators had been set incorrectly, and furthermore the alarms for them, indicating low flow, “had been disarmed since they caused nuisance problems during normal operations.”[26] Small wonder, after all this, that the flow indicators “were not closely monitored.” (Page 116)

- High temperature alarms would occur, but the operators learned to ignore them. (Page 118)

- After the paper was presented, a discussant noted it is not the newness of the plant that is the problem. Even in the older plants, he said, “We struggle to control it - Runaways will take place and control by these caps is not the answer…. The way it is now we are in difficulties and I don’t think anybody is sophisticated enough to operate the plant safely.”[33] The problems in this mature, but increasingly sophisticated and high volume industry, appear to lie in the nature of the highly interactive, very tightly coupled system itself, not in any design or equipment deficiencies that humans might overcome. (Page 120)

Chapter 5: Aircraft and Airways

- Thirty-one of the first forty pilots were killed in action, trying to meet the schedules for business and government mail. It may be the first nonmilitary example of a phenomenon that will concern us in this chapter: production pressures in this high-risk system. Though nothing comparable exists today in commercial aviation, such pressures are, as we shall see, far from negligible. (Page 125)

- Comment: Production pressure behaves as a global stiffener.

- Dependence upon multiple function units or subsystems was reduced by the segregation of the traffic (as well as by the use of transponders). More corridors were set up and restricted to certain kinds of flights. Small aircraft with low speeds (and without instrument flying equipment) were excluded from the altitudes where the fast jets fly (though controllers relent on this point, as we have just seen). Military flights were restricted to certain areas; parachutists were controlled. In this way, the system was made more linear. Of course, the density in any one corridor probably also has increased, offsetting the gain to some or even to a great degree. But if we could control for density, we would expect to find a decrease in unexpected interactions. (Page 159)

- Tight coupling reduces the ability to recover from small failures before they expand into large ones. Loose coupling allows recovery. In ATC processing delays are possible; aircraft are highly maneuverable and in three-dimensional space, so an airplane can be told to hold a pattern, to change course, slow down, speed up, or whatever. The sequence of landing or takeoff or insertion into a long-distance corridor is not invariant, though flexibility here certainly has its limits. The creation of more corridors reduced the coupling as well as the complexity of this system. Time constraints are still tight; the system is not loosely coupled, only moderately tightly coupled. But aircraft are maneuverable. They are also quite small. Near misses generally concern spaces of 200 feet to one mile. Those near misses reported to be under 100 feet are exceedingly rare and the proximity may be exaggerated. Even with 100 feet, though, there are 99 spare feet. If one tried, it would be hard to make two aircraft collide. In other high-risk systems it is comparatively easy to produce an explosion, or to defeat key safety systems and produce a core melt. (Page 160)

- Comment: The dimensions (degrees of freedom?) are different. In three dimensions, there is almost always a way out. I think this is why birds in flight never have events like terrestrial stampedes. Pilots can, though, if the system is coupled tightly enough.

- But in contrast to nuclear power plants and chemical plants (and recombinant DNA research), the system is not a transformation system, with hidden and poorly understood interactions that respond to indirect controls with indirect indicators. (An exception occurs in encountering buffet boundaries.) (Page 167)

Chapter 6: Marine Accidents

- The problem, it seems to me, lies in the type of system that exists. I will call it an “error-inducing” system; the configuration of its many components induces errors and defeats attempts at error reduction. (Page 172)

- Comment: I think social networks tend towards being “error-inducing” systems.

- The notion of an error-inducing system itself is derived from the complexity and coupling concepts. It sees some aspects as too loosely coupled (the insurance subsystem and shippers), others as too tightly coupled (shipboard organization); some aspects as too linear (shipboard organizations again, which are highly centralized and routinized), others as too complexly interactive (supertankers, and also the intricate interactions among marine investigations, courts, insurance agencies, and shippers). (Page 175)

- One major example, that of what I will call “noncollision course collisions,” will raise a problem we have encountered before, the social construction of reality, or building cognitive models of ambiguous situations. Why do two ships that would have passed in the night suddenly turn on one another and collide? (Page 176)

- The truckers do not want to use low gears and crawl down the other side of the Donner Summit, they want to go as fast as the turns and the highway patrol will allow them. Granted there are irresponsible drivers (irresponsible to themselves and their families as well as to third-party victims), just as all of us are irresponsible at times. But the work truckers do puts even irresponsible drivers in a situation where irresponsibility will have graver consequences than it does for most of us. Again, it is the system that must be analyzed, not the individuals. How should this system be designed to reduce the probability or limit the consequences of situations where irresponsibility can have an effect? (Page 180)

- Comment: My sense is that this is almost always a user-interface problem, where the interests of the larger population need to be factored in while still providing meaningful freedom (of choice?) to the individual.

- The encouragement of risk induces the owners and operators of the system to discard the elements of linearity and loose coupling that do exist, and to increase complexity and tight coupling. To some degree this discarding of intrinsic safety features occurs in all systems, but it appears to me to be far more prevalent in the marine system than in, say, nuclear power production and chemical production. I think the difference lies in the technological and environmental aspects of the system (fewer fixes available and a more severe environment), in its social organization (authority structure), and its catastrophic potential (which is less in the marine case, thus inviting less public intervention). (Page 187)

- Comment: Each one of these components is a different network of weights connecting the same nodes:

- Improvisational repairability

- Environment

- Social Organization

- Catastrophic potential

- We are led back to production pressures again. Even if CAS and radiotelephone communications (and inertial guidance systems, et cetera) would appear to reduce accidents in these studies, they also appear to increase speed and risk-taking, because the accident rate is growing steadily. Production pressures defeat the safety ends of safety devices and increase the pressures to use the devices to reduce operating expenses by going faster, or straighter; this makes the maritime system as a whole more complex (proximity, limited understanding) and tightly coupled (time dependent functions, limited slack). (Page 206)

- Comment: This is a really important externality that will have a huge impact in robotic systems where there is no need to consider another human. This could easily lead to a runaway condition that is completely invisible until too late. Sort of a more industrial, less dramatic SkyNet example.

- When we do dumb things in our car occasionally, we get an insight into how deck officers might do the same. Why do we, as drivers, or deck officers on ships, zig when we should have zagged, even when we are attentive and can see? I don’t know the many answers, but the following material will suggest that we construct an expected world because we can’t handle the complexity of the present one, and then process the information that fits the expected world, and find reasons to exclude the information that might contradict it. (Page 213)

- Comment: Is there neurological support for this?

- As drivers, we all would probably admit that at times we took unnecessary risks; but what we say to ourselves and others is, “I don’t know why; it was silly, stupid of me.” We generally do not do it because it was exciting. Finally, we cannot rule out exhaustion, or inebriation. Both exist. But neither are mentioned in the accident reports, and more important, from my own experience as a driver, skier, sailor, and climber, I know that I do inexplicable things when I am neither exhausted nor inebriated. So, in conclusion, I am arguing that constructing an expected world, while it begs many questions and leaves many things unexplained, at least challenges the easy explanations such as stupidity, inattention, risk taking, and inexperience. (Page 213)

- Comment: We do inexplicable things online as well, based on constructed, social reality.

- But what strikes me about this event is how well it exemplifies the easy way in which we can construct an interpretation of an ambiguous situation, process new information in the light of that interpretation—thus making the situation conform to our expectations—and, when distracted by other duties, make a last minute “correction” that fits with the private reality that no one else shares. (Page 217)

- Why would a ship in a safe passing situation suddenly turn and be impaled by a cargo ship four times its length? For the same reasons the operators of the TMI plant cut back on high pressure injection and uncovered the core. Confronted with ambiguous signals, the safest reality was constructed. In the above marine case this view of reality assumed that the other ship was not a head-on collision threat. (Page 217)

- Comment: I think that the constructed reality is the one that is most aligned with previous states that takes the least computation to construct. “Things will be fine” is always less computation than planning for catastrophe. And it’s usually the right answer. So why do some people spend their time exploring the space of potential catastrophes? I think this is explore/exploit management at the population level.

- As complicated as nuclear power and chemical plants are, the complexities are contained within the hardware and the human-machine interface. We may not grasp the functions of superdeheaters, but we know where they are and that they must function on call. In the aircraft and the airways systems, we let more of the environment in. It complicates the situation a bit. The operating envelope of air becomes a problem, as do thunderclouds, windshears, and white-outs. (Page 229)

- Comment: As long as there are no problems, nuclear reactors are a manufactured social construct, where the environmental reality (the core) is deeply hidden and ignorable. The weather is present and unavoidable. The environment helps to ground the aircraft systems.

- Marine transport appears to be an error-inducing system, where perverse interconnections defeat safety goals as well as operating efficiencies. Technological improvements did increase output but probably have helped increase accidents; with radar, the ship can go faster; when two ships have radar, they are even more likely to collide. The equivalent of CDTI (cockpit display of traffic information), which is to be introduced into the airliners, already exists at sea, and is useful only a small percent of the time, and may sometimes be counterproductive. Anyway, despite the increasingly sophisticated equipment, captains still inexplicably turn at the last minute and ram each other. We hypothesized that they built perfectly reasonable mental models of the world, which work almost all the time, but occasionally turn out to be almost an inversion of what really exists. The authoritarian structure aboard ship, perhaps functional for simpler times, appears to be inappropriate for complex ships in complex situations. Yet it may be sustained by the shipping industry and the insurance industry who need to determine liability almost as much as they need to stem the increase in accidents. It is reinforced by the “technological fix” which says, “Just give the leader more information, more accurately and faster.” (Page 230)

Chapter 7: Earthbound Systems: Dams, Quakes, Mines and Lakes

- Overall comment: This chapter covers systems that are embedded in a large, external, mostly observable reality. This does seem to make it more difficult for a complex accident to occur.

- The U.S. Bureau of Mines now calls for multishaft ventilation and for segmented ventilation; this eliminates many common mode failures where the whole air supply is endangered by a collapse of one ventilation shaft or the failure of one set of fans. (Page 250)

- Comment: An example of legislated diversity injection. There is no idea if a particular ventilation shaft will fail, but the risk of all failing is much lower, since they are not meaningfully connected. The legislation doesn’t have to know about mining per se, it can look at human survival needs (exits, food, air, water) and demand that there are redundant, distributed sources for those.
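The arithmetic behind this comment can be sketched in a few lines. This is only an illustration, not anything from the book or the Bureau of Mines: the function names and every probability below are hypothetical, and the model assumes shaft failures are independent except for a single shared "common mode" cause.

```python
def p_total_loss_independent(p_shaft: float, n_shafts: int) -> float:
    """Chance that every shaft fails, assuming the failures are independent."""
    return p_shaft ** n_shafts


def p_total_loss_with_common_mode(p_shaft: float, n_shafts: int, p_common: float) -> float:
    """Chance of losing all ventilation when one shared cause can take out
    every shaft at once (probability p_common); otherwise shafts fail independently."""
    return p_common + (1.0 - p_common) * p_shaft ** n_shafts


if __name__ == "__main__":
    p = 0.01  # hypothetical per-shaft failure probability over some period
    print("one shaft:                  ", p_total_loss_independent(p, 1))
    print("three independent shafts:   ", p_total_loss_independent(p, 3))
    # Hypothetical shared cause, e.g. all fans on one power feed.
    print("three shafts, shared cause: ", p_total_loss_with_common_mode(p, 3, 0.001))
```

Under these made-up numbers the shared cause dominates the result, which is the point of segmenting the ventilation: the extra shafts only help to the degree that they are not meaningfully connected.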

- Both dams and mining, and certainly the underground mining and surface drilling that produced the Lake Peigneur accident, have alerted us to the possibility of ecosystem accidents—the unanticipated expansion of the system and thus the scope of failures. Systems not thought to be linked suddenly are. (Page 254)

- Large dams and reservoirs create a complex new environment, and very little is known of the mutual interactions of the component forces, on a long-term basis. (Page 255)

- Comment: Also applies to global climate forcing.

Chapter 8: Exotics: Space, Weapons, and DNA

- The space missions illustrate that even where the talent and the funds are ample, and errors are likely to be displayed before a huge television audience, system accidents cannot be avoided. I have argued throughout this book that we should give all risky systems more quality control and training than we do, but also that where complexity and coupling lie, it will not be enough. We gave the space missions everything we had, but the system accidents still occurred. This is not a system with catastrophic potential; the victims are first-party victims. Catastrophic potential resides in most, but not all, complex and tightly coupled systems. (Page 257)

- The centerpiece of this part of the chapter will be an account of an extraordinary mission, Apollo 13, which commenced with a system accident, and ended with a recovery that dramatically illustrates the most exemplary attributes of both humans and their machines. The event will tell us something further about complexity and coupling: the recovery was possible because ground controllers were able to make the system more linear and more loosely coupled, and to put the operators back into the control loop that rarely included them. (Page 257)

- because of the safety systems involved in a launch-on-warning scenario, it is virtually impossible for well-intended actions to bring about an accidental attack (malevolence or derangement is something else). In one sense this is not all that comforting, since if there were a true warning that the Russian missiles were coming, it looks as if it would also be nearly impossible for there to be an intended launch, so complex and prone to failure is this system. It is an interesting case to reflect upon: at some point does the complexity of a system and its coupling become so enormous that a system no longer exists? Since our ballistic weapons system has never been called upon to perform (it cannot even be tested), we cannot be sure that it really constitutes a viable system. (Page 258)

- In Chapter 2, I argued that every industrial activity exhibits organizational failures, incompetence, greed, and some criminality. (Page 258)

- Comment: And this needs to be factored into any design, particularly the design of complex systems.

- The search for the problem was conducted with the well-worn assumption we have been exploring in this book: since the system is safe, or I wouldn’t be here, it must be a minor problem, or the lesser of two possible evils. Just as reactor cores had never been uncovered before, or that ship would never turn this way, or they would never set my course to hit a mountain, the idea that the heart of the spaceship might be broken was inconceivable, particularly for the managers and designers in Houston, all gathered together for the historic flight. Cooper puts it well: (Page 274)

- …they felt secure in the knowledge that the spacecraft was as safe a machine for flying to the moon as it was possible to devise. Obviously, men would not be sent into space in anything less, and inasmuch as men were being sent into space, the pressure around NASA to have confidence in the spacecraft was enormous. Everyone placed particular faith in the spacecraft’s redundancy: there were two or more of almost everything.

- Comment: “…almost…”. The complex behavior forms around the stiff links. This can be modeled, I think.

- The astronauts felt a jolt and this remained an important part of their analysis throughout the process of trying to track down the problem. (Page 277)

- Comment: Environmental awareness as another, non-social information channel

- The accident allows us to review some typical behavior associated with system accidents:

- initial incomprehension about what was indeed failing;

- failures are hidden and even masked;

- a search for a de minimus explanation, since a de maximus one is inconceivable;

- an attempt to maintain production if at all possible;

- mistrust of instruments, since they are known to fail;

- overconfidence in ESDs and redundancies, based upon normal experience of smooth operation in the past;

- ambiguous information is interpreted in a manner to confirm initial (de minimus) hypotheses;

- tremendous time constraints, in this case involving not only the propagation of failures, but the expending of vital consumables; and

- invariant sequences, such as the decision to turn off a subsystem that could not be restarted.

- All this did not just take place with a few high-school graduates with some drilling in reactor procedures, or a crusty old sea captain isolated in his absolute authority, but happened with three brilliant and extremely well-trained test pilots and a gaggle of managers (scientists and engineers all) backed up by the “Great Designers” themselves, all working shifts in Houston and wired to the spacecraft. (Page 278)

- In addition, almost every step they devised could be quickly and realistically tested in a very sophisticated simulator before it was tried out in the capsule—something rarely available to high-risk systems. (Page 278)

- Comment: In addition to diversity, simulators that afford exploring a space should be a requirement of resilient systems

- With multiple and independent sources of information, the more detailed the information, the more unlikely an error. (Page 289)

- Comment: This is a key aspect of diversity injection: diverse, easy to test bits of information that build a larger world view. With respect to the mineshaft legislation earlier, it’s easy to see if ventilation is coming from a shaft.

- With ecosystem accidents the risk cannot be calculated in advance and the initial event—which usually is not even seen as a component failure at all—becomes linked with other systems from which it was believed to be independent. The other systems are not part of any expected production sequence. The linkage is not only unexpected but once it has occurred it is not even well understood or easily traced back to its source. Knowledge of the behavior of the human-made material in its new ecological niche is extremely limited by its very novelty. Ecosystem accidents illustrate the tight coupling between human made systems and natural systems. There are few or no deliberate buffers inserted between the two systems because the designers never expected them to be connected. At its roots, the ecosystem accident is the result of a design error, namely the inadequate definition of system boundaries. (Page 296)

Chapter 9: Living with High-Risk Systems

- Ultimately, the issue is not risk, but power; the power to impose risks on the many for the benefit of the few. (Page 306)

- When societies confront a new or explosively growing evil, the number of risk assessors probably grows—whether they are shamans or scientists. (Page 307)

- heroic efforts would be needed to educate the general public in the skills needed to decide complex issues of risk. At the basis of this is a quarrel about forms of rationality in human affairs. (Page 315)

- Comment: A good place for games and simulations?

- It is convenient to think of three forms of rationality: absolute rationality, which is enjoyed primarily by economists and engineers; “bounded” or limited rationality, which a growing wing of risk assessors emphasize; and what I will call social and cultural rationality, which is what most of us live by, although without thinking that much about it. (Page 315)

- Comment: These map roughly onto my groupings, though “Absolute” would be “environmental”

- Absolute rationality – Nomadic

- Bounded rationality – Flocking

- Socio-cultural rationality – Stampede

- One important and unintended conclusion that does come from this work is the overriding importance of the context into which the subject puts the problem. Recall our nuclear power operators, or the crew of the New Zealand DC-10 on its sightseeing trip, or the mariners interpreting ambiguous signals. The decisions made in these cases were perfectly rational; it was just that the operators were using the wrong contexts. Selecting a context (“this can happen only with a small pipe break in the secondary system”) is a pre-decision act, made without reflection, almost effortlessly, as a part of a stream of experience and mental processing. We start “thinking” or making “decisions” based upon conscious, rational effort only after the context has become defined. And defining the context is a much more subtle, self-steering process, influenced by long experience with trials and errors (much as the automatic adjustments made in driving or walking on a busy street). If a situation is ambiguous, without thinking about it or deciding upon it, we sometimes pick what seems to be the most familiar context, and only then do we begin to consciously reason. This is what appears to happen in a great many of the psychological experiments. Without conscious thought (the kind that can be easily and fairly accurately recalled), the subject says, “This is like x: I will do what I usually do then.” The results of these experiments strongly suggest that the context supplied by the subjects is not the context the experimenter expected them to supply. With an ill-defined context, the subject of the experiment may say, “Oh, this is like situation A in real life, and this is what I generally do,” while the experimenter thinks that the subject is assuming it is like situation B in real life, and is surprised by what the subject does. (Page 318)

- Comment: This aligns with ‘Conflict and Consensus’ and is a form of dimension reduction

- Finally, heuristics are akin to intuitions. Indeed, they might be considered to be regularized, checked-out intuitions. An intuition is a reason, hidden from our consciousness, for certain apparently unrelated things to be connected in a causal way. Experts might be defined as people who abjure intuitions; it is their virtue to have flushed out the hidden causal connections and subjected them to scrutiny and testing, and thus discarded them or verified them. Intuitions, then, are especially unfortunate forms of heuristics, because they are not amenable to inspection. This is why they are so fiercely held even in the face of contrary evidence; the person insists the evidence is irrelevant to their “insight.” (Page 319)

- Comment: A nice distinction between Nomad and Stampede framings

- Finally, bounded rationality is efficient because it avoids an extensive amount of effort. For the citizen, think of the work that could be required to decide just what the TMI accident signified. In the experts’ view, the public should make the effort to answer the following questions, and if they can’t, should accept the experts’ answers. Did the accident fit in the technical fault tree analysis the experts constructed in WASH-1400, the Rasmussen Report (a matter of several volumes of technical writing)? How many times in the past have we come close to an accident of this type? Can we correct the system and thus learn from the accident? Was it accurately reported? Do the experts agree on what happened? Did it fit the base rate of events that led to the prediction that it was a very rare event? And so on. The experts do not have answers to some of these questions, so the public, even were they to devote some months of study to the problem, could not be assured that the answer could be known. (Page 320)

- Comment: Intelligence is computation, and expensive.

- The most important factor, which they labeled “dread risk,” was associated with (Page 326):

- lack of control over the activity;

- fatal consequences if there were a mishap of some sort;

- high catastrophic potential;

- reactions of dread;

- inequitable distribution of risks and benefits (including the transfer of risks to future generations), and

- the belief that risks are increasing and not easily reducible.

- Comment: These clusters are a form of dimension reduction that emerges spontaneously in a human population. See “Perceived risk: psychological factors and social implications.”

- The dimension of dread—lack of control, high fatalities and catastrophic potential, inequitable distribution of risks and benefits, and the sense that these risks are increasing and cannot be easily reduced by technological fixes—clearly was the best predictor of perceived risk. This is what we might call, after Clifford Geertz, a “thick description” of hazards rather than a “thin description.” A thin one is quantitative, precise, logically consistent, economical, and value-free. It embraces many of the virtues of engineering and the physical sciences, and is consistent with what we have called component failure accidents—failures that are predictable and understandable and in an expected production sequence. A thick description recognizes subjective dimensions and cultural values and, in this example, shows a skepticism about human-made systems and institutions, and emphasizes social bonding and the tentative, ambiguous nature of experience. A thick description reflects the nature of system accidents, where unanticipated, unrecognizable interactions of failures occur, and the system does not allow for recovery. (Page 328)

- Comment: The Interpretation of Cultures

- But it is fair to ask whether we have progressed enough as a species to handle the more immediate, short-term problems of DNA, chemical plants, nuclear plants, and nuclear weapons. Recall the major thesis of this book: systems that transform potentially explosive or toxic raw materials or that exist in hostile environments appear to require designs that entail a great many interactions which are not visible and in expected production sequence. Since nothing is perfect—neither designs, equipment, operating procedures, operators, materials, and supplies, nor the environment—there will be failures. If the complex interactions defeat designed-in safety devices or go around them, there will be failures that are unexpected and incomprehensible. If the system is also tightly coupled, leaving little time for recovery from failure, little slack in resources or fortuitous safety devices, then the failure cannot be limited to parts or units, but will bring down subsystems or systems. These accidents then are caused initially by component failures, but become accidents rather than incidents because of the nature of the system itself; they are system accidents, and are inevitable, or “normal” for these systems. (Page 330)

- Accidents will be avoided if the system is also loosely coupled, (cell 4, universities and R&D units) because loose coupling gives time, resources, and alternative paths to cope with the disturbance and limits its impact. (Page 331)

- For organizations that are both linear and loosely coupled (cell 3; most manufacturing, and single goal agencies such as a state liquor authority, vehicle registration, or licensing bureau) centralization is feasible because of the linearity, but decentralization is feasible because of the loose coupling. Thus, these organizations have a choice, insofar as organizational structure affects recovery from inevitable failures. The fact that most have opted for centralized structures says a good bit about the norms of elites who design these systems, and perhaps subtle matters such as the “reproduction of the class system” (keeping people in their place). Organizational theorists generally find such organizations overcentralized and recommend various forms of decentralization for both productivity and social rationality reasons. (Page 334)

- Comment: Designed-in inefficiency is good for preventing accidents but bad for profit.
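The interaction/coupling grid that the last few quotes refer to can be written down as a small lookup. This is a rough sketch, not Perrow's own formulation: cells 3 and 4 follow the quoted passages, while the numbering of cells 1 and 2 is my recollection of the book's chart and should be treated as an assumption. The `feasible_structures` rule generalizes the cell 3 quote (linearity permits centralization, loose coupling permits decentralization) to the other cells, which is also an extrapolation.

```python
from enum import Enum


class Interactions(Enum):
    LINEAR = "linear"
    COMPLEX = "complex"


class Coupling(Enum):
    TIGHT = "tight"
    LOOSE = "loose"


# Cells 3 and 4 match the quoted passages. Cells 1 and 2 are filled in
# from recollection of the book's chart, so treat them as an assumption.
CELLS = {
    (Interactions.LINEAR, Coupling.TIGHT): 1,
    (Interactions.COMPLEX, Coupling.TIGHT): 2,
    (Interactions.LINEAR, Coupling.LOOSE): 3,
    (Interactions.COMPLEX, Coupling.LOOSE): 4,
}


def cell(interactions, coupling):
    """Return the cell number for a system's interaction and coupling type."""
    return CELLS[(interactions, coupling)]


def prone_to_system_accidents(interactions, coupling):
    """The book's thesis: complex interactions plus tight coupling."""
    return interactions is Interactions.COMPLEX and coupling is Coupling.TIGHT


def feasible_structures(interactions, coupling):
    """Extrapolated from the cell 3 quote: linearity permits centralization,
    loose coupling permits decentralization."""
    options = set()
    if interactions is Interactions.LINEAR:
        options.add("centralized")
    if coupling is Coupling.LOOSE:
        options.add("decentralized")
    return options


if __name__ == "__main__":
    for (i, c), n in sorted(CELLS.items(), key=lambda kv: kv[1]):
        print(n, i.value, c.value, sorted(feasible_structures(i, c)),
              prone_to_system_accidents(i, c))
```

Under this rule the complex, tightly coupled cell ends up with no feasible structure at all, which is one way to read the claim that these systems outrun any organization we can tolerate.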

- Organizational theorists have long since given up hope of finding perfect or even exceedingly well-run organizations, even where there is no catastrophic potential. It is an enduring limitation—if it is a limitation—of our human condition. It means that humans do not exist to give their all to organizations run by someone else, and that organizations inevitably will be run, to some degree, contrary to their interests. This is why it is not a problem of “capitalism”; socialist countries, and even the ideal communist system, cannot escape the dilemmas of cooperative, organized effort on any substantial scale and with any substantial complexity and uncertainty. At some point the cost of extracting obedience exceeds the benefits of organized activity. (Page 338)

- Comment: First point: should we expect any better of machine organizations? Why? Second point: These are curves that cross in a fuzzy way that incorporate short term profit and long term sustainability.

- What, then, of a less ambitious explanation than capitalism, namely greed (whether due to human nature or structural conditions)—or to put it more analytically, private gain versus the public good? (Page 340)

- Comment: There is something here. It is not anyone’s private gain, but the gain of the powerful, the amplified. The ability of one person to have the effect of a stampede. In capitalism, this can be achieved using money. In other systems it’s birth, luck, access to power, etc. It can be very good for the few to drive the many over the cliff…

- Systems that have high status, articulate, and resource-endowed operators are more likely to have the externalities brought to public attention (thus pressuring the system elites) than those with low-status, inarticulate, and impoverished operator groups. To protect their own interests, the operators in the first category can argue the public interest, since both operators and the public will suffer from externalities. The contrast here runs from airline pilots at the favorable extreme, through chemical plant operators, then nuclear plant operators, to miners—the weakest group. (Page 342)

- Comment: We may only get one 10,000-car pileup. But think about what’s going on in the ML transformation of the justice system

- The main point of the book is to see these human constructions as systems, not as collections of individuals or representatives of ideologies. From our opening accident with the coffeepot and job interview through the exotics of space, weapons, and microbiology, the theme has been that it is the way the parts fit together, interact, that is important. The dangerous accidents lie in the system, not in the components. The nature of the transformation processes elude the capacities of any human system we can tolerate in the case of nuclear power and weapons; the air transport system works well—diverse interests and technological changes support one another; we may worry much about the DNA system with its unregulated reward structure, less about chemical plants; and though the processes are less difficult and dangerous in mining and marine transport, we find the system of each is an unfortunate concatenation of diverse interests at cross-purposes. (Page 351)

- Comment: Systems are directed networks. But then ideologies are too. They run at different scales though.

- These systems are human constructions, whether designed by engineers and corporate presidents, or the result of unplanned, unwitting, crescive, slowly evolving human attempts to cope. (Page 351)

- Comment: These are the kinds of systems that partially informed agents make. They are mechanisms that populations use to make decisions.

- The catastrophes send us warning signals. This book has attempted to decode these signals: abandon this, it is beyond your capabilities; redesign this, regardless of short-run costs; regulate this, regardless of the imperfections of regulation. But like the operators of TMI who could not conceive of the worst—and thus could not see the disasters facing them—we have misread these signals too often, reinterpreting them to fit our preconceptions. (Page 351)

Afterword:

- But a little reflection on Bhopal led me to invert “Normal Accident Theory” in order to see how this tragedy was possible. A few hundred plants with this catastrophic potential could quietly exist for forty years without realizing their potential because it takes just the right combination of circumstances to produce a catastrophe, just as it takes the right combination of inevitable errors to produce an accident. I think this deserves to be called the “Union Carbide factor.” (Page 356)

- Comment: Power law again?
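
- Comment: The "right combination of circumstances" argument can be made concrete with a little arithmetic. The sketch below is illustrative only and is not from the book. It assumes that a catastrophe at one plant in one year requires several conditions to hold at once, and that those conditions are independent (a strong simplification, since Perrow's point is that real failures interact). The plant count, the number of conditions, and their probabilities are all hypothetical.

```python
import math

def p_no_catastrophe(condition_probs, plants, years):
    """Probability that no plant has a catastrophe over the whole period.

    Assumes every condition must hold simultaneously and that conditions,
    plants, and years are all independent (a deliberate simplification).
    """
    p_plant_year = math.prod(condition_probs)  # all conditions line up
    return (1.0 - p_plant_year) ** (plants * years)

# HYPOTHETICAL numbers: 5 conditions, each with a 10% chance per plant-year,
# across 300 plants ("a few hundred") for 40 years.
conditions = [0.1] * 5
quiet = p_no_catastrophe(conditions, plants=300, years=40)
print(f"P(no catastrophe anywhere in 40 years) = {quiet:.2f}")
```

Under these made-up numbers, forty quiet years would be the likely outcome even though every plant carries the potential, which is the shape of the "Union Carbide factor" argument.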

- It is hard to have a catastrophe; everything has to come together just right (or wrong). When it does we have “negative synergy.” Since catastrophes are rare, elites, I conclude, feel free to populate the earth with these kinds of risky systems. (Page 358)

- Comment: There is some level of implicit tradeoff in bringing incompletely understood technologies to market. “Move fast and break things” is an effective way to get past the valley of death as fast as possible – i.e. “Production Pressure”.

- Both our predictions about the possibilities of accidents and our explanations of them after they occur are profoundly compromised by our act of “social construction.” We do not know what to look for in the first place, and we jump to the most convenient explanation (culture, or bad conditions) in the second place. If an accident has occurred, we will find the most convenient explanations. (Page 359)

- Comment: “Rush to judgement” has a long history. From the OED – Ld. Erskine Speeches Misc. Subj. (1812) 7 “An attack upon the King is considered to be patricide against the state, and the Jury and the witnesses, and even the Judges, are the children. It is fit, on that account, that there should be a solemn pause before we rush to judgment.”

- Were we to perform a thought experiment, in which we would go into a plant that was not having an accident but assume there had just been one, we would probably find “an accident waiting to happen” (Page 359)

- Comment: Pre-mortems are a thing. Should they be used more often?

- “We might postulate a notion of Organizational Cognitive Complexity,” he writes, “i.e., that the sheer number of nodes and states to be monitored exceeds the organizational capacity to anticipate or comprehend. Complexity Indices for major refinery unit processes varied from 156,000,000 for alkylation/polymerization down to 90 for crude refining. The very use of a logarithmic scale to classify complexity suggests the cognitive challenges facing an organization.” A single component failure thus produces other failures in a (temporarily) incomprehensible manner if the complexity of the system is cognitively challenging. Berniker adds another significant point in his letter: the complexity of the system may not be necessary from a technical point of view, but is mostly the result of poorly planned accretions. (Page 363)
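
- Comment: To see why a logarithmic scale is needed, it helps to work out how far apart the two quoted indices are. This is a small sketch using only the two figures in the passage above; the function name and the dictionary are mine, not Berniker's.

```python
import math

# Complexity indices quoted from Berniker's letter in the passage above.
COMPLEXITY_INDEX = {
    "alkylation/polymerization": 156_000_000,
    "crude refining": 90,
}

def orders_of_magnitude(high: float, low: float) -> float:
    """Return how many powers of ten separate two positive values."""
    return math.log10(high) - math.log10(low)

for process, index in COMPLEXITY_INDEX.items():
    print(f"{process}: index={index:,} log10={math.log10(index):.2f}")

span = orders_of_magnitude(
    COMPLEXITY_INDEX["alkylation/polymerization"],
    COMPLEXITY_INDEX["crude refining"],
)
print(f"span: about {span:.1f} orders of magnitude")
```

The two unit processes differ by roughly six orders of magnitude, so no linear scale could show both meaningfully.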

- More valuable still is Sagan’s development of two organizational concepts that were important for my book: the notion of bounded rationality (the basis of “Garbage Can Theory”), and the notion of organizations as tools to be used for the interests of their masters (the core of a “power theory”). Both garbage can and power theory are noted in passing in my book, but Sagan’s work makes both explicit tools of analysis. Risky systems, I should have stressed more than I did, are likely to have high degrees of uncertainty associated with them and thus one can expect “garbage can” processes, that is, unstable and unclear goals, misunderstanding and mis-learning, happenstance, and confusion as to means. (For the basic work on garbage can theory, see Cohen, March, and Olsen 1988; March and Olsen 1979. For a brief summary, see Perrow 1986, 131–154.) A garbage can approach is appropriate where there is high uncertainty about means and goals and an unstable environment. It invites a pessimistic view of such things as efficiency or commitments to safety. (Page 369)

- few managers are punished for not putting safety first even after an accident, but will quickly be punished for not putting profits, market share, or agency prestige first. (Page 370)

- Second, why doesn’t more learning take place? One answer is provided by the title of an interesting piece: “Learning from mistakes is easier said than done …” (Edmonson 1996) in which Edmonson discusses the ample group process barriers to admitting and learning from mistakes. James Reason offers another colorful and more elaborate discussion (1990). Another is that elites do learn, but the wrong things. They learn that disasters are rare and they are not likely to be vulnerable so, in view of the attractions of creating and running risky systems the benefits truly do outweigh the risks for individual calculators. This applies to lowly operators, too; most of the time cutting corners works; only rarely does it “bite back,” in the wonderful terminology that Tenner uses in his delightful book of accident illustrations, though sometimes it will (Tenner 1996). (Page 371)

- There are important system characteristics that determine the number of inevitable (though often small) failures which might interact unexpectedly, and the degree of coupling which determines the spread and management of the failures. Some of these are: (Page 371)

- Experience with operating scale—did it grow slowly, accumulating experience (fossil fuel utility plants) or rapidly with no experience of the new configurations or volumes (nuclear power plants)?

- Experience with the critical phases—if starting and stopping are the risky phases, does this happen frequently (take offs and landings) or infrequently (nuclear plant outages)?

- Information on errors—is this shared within and between the organizations? (Yes, in the Japanese nuclear power industry; barely in the U.S.) Can it be obtained, as in air transport, or does it go to the bottom with the ship?

- Close proximity of elites to the operating system—they fly on airplanes but don’t ship on rusty bottoms or live next to chemical plants.

- Organizational control over members—solid with the naval carriers, partial with nuclear defense, mixed with marine transport (new crews each time), absent with chemical and nuclear plants (and we are hopefully reluctant to militarize all risky systems).

- Organizational density of the system’s environment (vendors, subcontractors, owner’s/operator’s trade associations and unions, regulators, environmental groups, and competitive systems)—if rich, there will be persistent investigations and less likelihood of blaming God or the operators, and more attempts to increase safety features.

- When the Exxon Valdez accident occurred Clarke took these insights with him to Alaska, where he came upon a truly mammoth social construction, the several contingency plans put out by the oil industry for cleaning up 80 to 90 percent of any spill, plans that necessitated getting permission to operate in the Sound. The plans were so fanciful that it was hard to take them seriously. There was no case on record of a substantial cleanup of a large spill, yet industry and government regulators promised just that. There was such a disjuncture between what they said they could do and what they actually could do that Clarke saw the plans as symbols rather than blueprints, as “fantasy documents.” (Page 374)

- Comment: This is an attempt to represent a social reality as a physical one.

- Snook makes another contribution. This is, he says, a normal accident without any failures. How can that be? Well, there were minor deviations in procedures that saved time and effort or corrected some problems without disclosing potential ones. Organizations and large systems probably could not function without the lubricant of minor deviations to handle situations no designer could anticipate. Individually they were inconsequential deviations, and the system had over three years of daily safe operations. But they resulted in the slow, steady uncoupling of local practice—the jets, the AWACS, the army helicopters—from the written procedures, a practice he labels “practical drift.” Local adaptation, he says, can lead to large system disaster. The local adaptations are like a fleet of boats sailing to a destination; there is continuous, intended adjustment to the wind and the movement of nearby boats. The disaster comes when, only from high above the fleet, or from hindsight, we see that the adaptive behavior imperceptibly and unexpectedly results in the boats “drifting” into gridlock, collision, or grounding. (Page 378)

- Instead, she (Diane Vaughan) builds what would be called a “social construction of reality” case that allowed the banality of bureaucracy to create a habit of normalizing deviations from safe procedures. They all did what they were supposed to do, and were used to doing, and the deviations finally caught up with them. (Page 380)

- this was not the normalization of deviance or the banality of bureaucratic procedures and hierarchy or the product of an engineering “culture”; it was the exercise of organizational power. We miss a great deal when we substitute culture for power. (Page 380)

- Comment: One thing that I haven’t really thought about is the role of power in forcing a stampede. There may be herding aspects of a “rush to judgement”

- New financial instruments such as derivatives and hedge funds and new techniques such as programmed trading further increase the complexity of interactions. Breaking up a loan on a home into tiny packages and selling them on a world-wide basis increases interdependency. (Page 385)

- Comment: This was written in 1999!

- But another condition of interdependency is not a bunch of pipes with tight fittings and one direction of flow, but a web of connections wherein some pathways are multipurpose, some might not be used, some reversed, some used in unforeseen ways, some spilling excess capacity, and some buffering and isolating disturbances. This is a more “organic” conception of interdependency, consistent with loose coupling. The distinction is an old one in sociology, going back at least to Emile Durkheim (mechanical and organic solidarity) and Ferdinand Toennies (society versus community) and finding echos in most major sociological thinkers. Predictions follow freely. External threats are supposed to heighten organic solidarity, internal economic downturns, mechanical solidarity. Modernization and bureaucracy find their rationale and techniques in mechanical coupling; communitarian, religious, and cult outbreaks find their rationale in organic coupling and solidarity. (Page 392)

- This points to the basic difference between 1) software problems—billions of lines of code in forgotten programs in computers that have not been manufactured for ten years—which is bad enough, and 2) the embedded chip problem—billions of chips that are in controllers, rheostats, pumps, valves, regulators, safety systems and so on, which are hard-wired and must be taken out and replaced. (Page 401)