- Robert Axelrod is the Walgreen Professor for the Study of Human Understanding at the University of Michigan. He has appointments in the Department of Political Science and the Gerald R. Ford School of Public Policy. He is best known for his interdisciplinary work on the evolution of cooperation, which has been cited more than 30,000 times. His current research interests include international security and sense-making.

Quick Takeaway

- One of the first uses of computers to analyze game theory, in this case the Iterated Prisoner’s Dilemma (IPD). The book covers a tournament between submitted algorithms for the IPD, where the winner, surprisingly, was TIT-FOR-TAT (TFT). TFT cooperates on the first move and then simply repeats the other player’s previous move, yet it beat considerably more complicated algorithms.

- Based on the outcome of the first tournament, a second tournament was held, and TFT won it as well. The book spends most of its time either bringing the reader up to speed on the IPD or discussing the ramifications of TFT’s success. The main point of the book is the surprising robustness of TFT, examined in a variety of contexts.

- One thing that strikes me is that once TFT successfully takes over a population, it becomes computationally easier to simply ALWAYS-COOPERATE, since against other TFT players the two behave identically. ALWAYS-COOPERATE could then drift to dominance, leaving the population completely vulnerable to ALWAYS-DEFECT.
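
- A minimal sketch of this drift argument, assuming the book's payoffs (T=5, R=3, P=1, S=0) and a fixed 200-move game. The function names and the history-list interface are my own hypothetical choices. The sketch only shows that the scores are equal; it does not model computational cost, which is the assumption doing the work in the note above.

```python
# Payoffs (first player, second player) with T=5, R=3, P=1, S=0.
PAYOFF = {("C", "C"): (3, 3), ("C", "D"): (0, 5),
          ("D", "C"): (5, 0), ("D", "D"): (1, 1)}

def tit_for_tat(mine, theirs):
    # Cooperate first, then copy the opponent's previous move.
    return theirs[-1] if theirs else "C"

def always_cooperate(mine, theirs):
    return "C"

def always_defect(mine, theirs):
    return "D"

def play(a, b, rounds=200):
    """Return the total payoffs (score_a, score_b) of a fixed-length game."""
    hist_a, hist_b = [], []
    score_a = score_b = 0
    for _ in range(rounds):
        move_a, move_b = a(hist_a, hist_b), b(hist_b, hist_a)
        pay_a, pay_b = PAYOFF[(move_a, move_b)]
        score_a += pay_a
        score_b += pay_b
        hist_a.append(move_a)
        hist_b.append(move_b)
    return score_a, score_b

# In a TFT population, an ALWAYS-COOPERATE mutant scores exactly what a native does.
print(play(always_cooperate, tit_for_tat)[0], play(tit_for_tat, tit_for_tat)[0])  # 600 600
# Once ALWAYS-COOPERATE dominates, an ALWAYS-DEFECT mutant far outscores the natives.
print(play(always_defect, always_cooperate)[0], play(always_cooperate, always_cooperate)[0])  # 1000 600
# By contrast, ALWAYS-DEFECT gains little against TFT.
print(play(always_defect, tit_for_tat)[0], play(tit_for_tat, tit_for_tat)[0])  # 204 600
```

With no payoff difference between TFT and ALWAYS-COOPERATE, nothing selects against the drift; any cost of remembering the opponent's last move would tip the balance toward the simpler rule.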

Notes

- By the final chapter, the discussion has developed from the study of the emergence of cooperation among egoists without central authority to an analysis of what happens when people actually do care about each other and what happens when there is central authority. But the basic approach is always the same: seeing how individuals operate in their own interest reveals what happens to the whole group. This approach allows more than the understanding of the perspective of a single player. It also provides an appreciation of what it takes to promote the stability of mutual cooperation in a given setting. The most promising finding is that if the facts of Cooperation Theory are known by participants with foresight, the evolution of cooperation can be speeded up. (Page 24)

- A computer tournament for the study of effective choice in the iterated Prisoner’s Dilemma meets these needs. In a computer tournament, each entrant writes a program that embodies a rule to select the cooperative or noncooperative choice on each move. The program has available to it the history of the game so far, and may use this history in making a choice. If the participants are recruited primarily from those who are familiar with the Prisoner’s Dilemma, the entrants can be assured that their decision rule will be facing rules of other informed entrants. Such recruitment would also guarantee that the state of the art is represented in the tournament. (Page 30)
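
- The tournament setup described in this passage can be sketched as follows: each rule is a function that sees both players' move histories and returns C or D, and a round robin sums each rule's scores. The 200-move game length, the self-play match, and the rule named GRUDGER (cooperate until the first defection, then defect forever) are illustrative assumptions for this sketch, not details taken from the quote.

```python
from itertools import combinations_with_replacement

# Payoffs (first player, second player) with T=5, R=3, P=1, S=0.
PAYOFF = {("C", "C"): (3, 3), ("C", "D"): (0, 5),
          ("D", "C"): (5, 0), ("D", "D"): (1, 1)}

# Each rule sees the history so far (its own moves, the opponent's moves)
# and returns "C" (cooperate) or "D" (defect).
def tit_for_tat(mine, theirs):
    return theirs[-1] if theirs else "C"

def always_defect(mine, theirs):
    return "D"

def always_cooperate(mine, theirs):
    return "C"

def grudger(mine, theirs):
    # Hypothetical entrant: cooperate until the opponent defects once, then defect forever.
    return "D" if "D" in theirs else "C"

def play(a, b, rounds=200):
    """Return the total payoffs (score_a, score_b) of a fixed-length game."""
    hist_a, hist_b = [], []
    score_a = score_b = 0
    for _ in range(rounds):
        move_a, move_b = a(hist_a, hist_b), b(hist_b, hist_a)
        pay_a, pay_b = PAYOFF[(move_a, move_b)]
        score_a += pay_a
        score_b += pay_b
        hist_a.append(move_a)
        hist_b.append(move_b)
    return score_a, score_b

def round_robin(entrants, rounds=200):
    """Every rule meets every other rule once, plus a copy of itself."""
    totals = dict.fromkeys(entrants, 0)
    for name_a, name_b in combinations_with_replacement(entrants, 2):
        score_a, score_b = play(entrants[name_a], entrants[name_b], rounds)
        totals[name_a] += score_a
        if name_a != name_b:  # count a self-match only once
            totals[name_b] += score_b
    return totals

ENTRANTS = {"TIT FOR TAT": tit_for_tat, "ALWAYS DEFECT": always_defect,
            "GRUDGER": grudger, "ALWAYS COOPERATE": always_cooperate}
print(round_robin(ENTRANTS))
# {'TIT FOR TAT': 1999, 'ALWAYS DEFECT': 1608, 'GRUDGER': 1999, 'ALWAYS COOPERATE': 1800}
```

With this tiny field TIT FOR TAT only ties GRUDGER, a reminder that a rule's total depends heavily on who else entered.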

- The sample program sent to prospective contestants to show them how to make a submission would in fact have won the tournament if anyone had simply clipped it and mailed it in! But no one did. The sample program defects only if the other player defected on the previous two moves. It is a more forgiving version of TIT FOR TAT in that it does not punish isolated defections. The excellent performance of this TIT FOR TWO TATS rule highlights the fact that a common error of the contestants was to expect that gains could be made from being relatively less forgiving than TIT FOR TAT, whereas in fact there were big gains to be made from being even more forgiving. The implication of this finding is striking, since it suggests that even expert strategists do not give sufficient weight to the importance of forgiveness. (Page 39)
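
- A sketch of why forgiveness matters, assuming the book's payoffs (T=5, R=3, P=1, S=0) and a 200-move game. The rule `tit_for_tat_with_one_slip` is hypothetical: it plays TIT FOR TAT but defects once, on move 10.

```python
# Payoffs (first player, second player) with T=5, R=3, P=1, S=0.
PAYOFF = {("C", "C"): (3, 3), ("C", "D"): (0, 5),
          ("D", "C"): (5, 0), ("D", "D"): (1, 1)}

def tit_for_tat(mine, theirs):
    return theirs[-1] if theirs else "C"

def tit_for_two_tats(mine, theirs):
    # Defect only if the opponent defected on both of the previous two moves.
    return "D" if theirs[-2:] == ["D", "D"] else "C"

def tit_for_tat_with_one_slip(mine, theirs):
    # Hypothetical rule: TIT FOR TAT, except an unprovoked defection on move 10 (index 9).
    if len(mine) == 9:
        return "D"
    return theirs[-1] if theirs else "C"

def play(a, b, rounds=200):
    """Return the total payoffs (score_a, score_b) of a fixed-length game."""
    hist_a, hist_b = [], []
    score_a = score_b = 0
    for _ in range(rounds):
        move_a, move_b = a(hist_a, hist_b), b(hist_b, hist_a)
        pay_a, pay_b = PAYOFF[(move_a, move_b)]
        score_a += pay_a
        score_b += pay_b
        hist_a.append(move_a)
        hist_b.append(move_b)
    return score_a, score_b

print(play(tit_for_tat, tit_for_tat_with_one_slip))       # (502, 507)
print(play(tit_for_two_tats, tit_for_tat_with_one_slip))  # (597, 602)
```

Against TIT FOR TAT the single slip sets off an endless alternation of retaliation; TIT FOR TWO TATS absorbs it and returns to mutual cooperation, and both players end up better off. The trade-off is that TIT FOR TWO TATS never retaliates against a rule that defects on every other move.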

- The second round was also a dramatic improvement over the first round in sheer size of the tournament. The response was far greater than anticipated. There was a total of sixty-two entries from six countries. The contestants were largely recruited through announcements in journals for users of small computers. The game theorists who participated in the first round of the tournament were also invited to try again. The contestants ranged from a ten-year-old computer hobbyist to professors of computer science, physics, economics, psychology, mathematics, sociology, political science, and evolutionary biology. The countries represented were the United States, Canada, Great Britain, Norway, Switzerland, and New Zealand. (Page 41)

- A nice argument for and example of diversity.

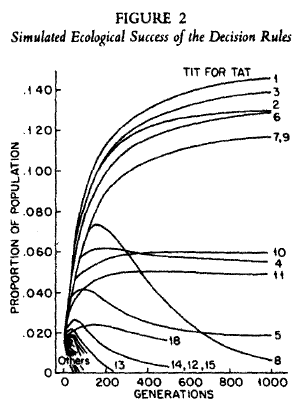

- A good way to examine this question is to construct a series of hypothetical tournaments, each with a very different distribution of the types of rules participating. The method of constructing these drastically modified tournaments is explained in appendix A. The results were that TIT FOR TAT won five of the six major variants of the tournament, and came in second in the sixth. This is a strong test of how robust the success of TIT FOR TAT really is. (Page 48)

- This simulation provides an ecological perspective because there are no new rules of behavior introduced. It differs from an evolutionary perspective, which would allow mutations to introduce new strategies into the environment. In the ecological perspective there is a changing distribution of given types of rules. The less successful rules become less common and the more successful rules proliferate. (Page 51)
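
- One simple way to model this ecological process is a proportional-growth update (a discrete replicator equation): each rule's share in the next generation is its current share weighted by its average score against the current population. This is a sketch under my own assumptions, not necessarily the exact procedure in the book's appendix; it uses only three rules, and the score matrix was precomputed from 200-move games with T=5, R=3, P=1, S=0.

```python
RULES = ["TIT FOR TAT", "ALWAYS DEFECT", "ALWAYS COOPERATE"]
# SCORE[i][j]: total payoff rule i earns against rule j in a 200-move game
# (precomputed for these three rules with T=5, R=3, P=1, S=0).
SCORE = [
    [600, 199, 600],   # TIT FOR TAT vs each rule
    [204, 200, 1000],  # ALWAYS DEFECT vs each rule
    [600, 0, 600],     # ALWAYS COOPERATE vs each rule
]

def next_generation(shares):
    """Each rule's new share is proportional to its old share times its
    average score against the current population."""
    n = len(shares)
    fitness = [sum(SCORE[i][j] * shares[j] for j in range(n)) for i in range(n)]
    total = sum(s * f for s, f in zip(shares, fitness))
    return [s * f / total for s, f in zip(shares, fitness)]

shares = [1 / 3, 1 / 3, 1 / 3]
for generation in range(100):
    shares = next_generation(shares)

for rule, share in zip(RULES, shares):
    print(f"{rule:17s} {share:.3f}")
```

ALWAYS DEFECT grows briefly at first while it still has ALWAYS COOPERATE to exploit, then collapses. ALWAYS COOPERATE survives at a reduced share because, once the defectors are gone, it is indistinguishable from TIT FOR TAT.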

- This approach imagines the existence of a whole population of individuals employing a certain strategy, and a single mutant individual employing a different strategy. The mutant strategy is said to invade the population if the mutant can get a higher payoff than the typical member of the population gets. Put in other terms, the whole population can be imagined to be using a single strategy, while a single individual enters the population with a new strategy. The newcomer will then be interacting only with individuals using the native strategy. Moreover, a native will almost certainly be interacting with another native since the single newcomer is a negligible part of the population. Therefore a new strategy is said to invade a native strategy if the newcomer gets a higher score with a native than a native gets with another native. Since the natives are virtually the entire population, the concept of invasion is equivalent to the single mutant individual being able to do better than the population average. This leads directly to the key concept of the evolutionary approach. A strategy is collectively stable if no strategy can invade it. (Page 56)
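
- The invasion test can be sketched as a comparison of discounted scores, with move k weighted by w^k, where w is the book's discount parameter. The list of candidate mutants and the truncation at 1000 moves are my own assumptions. Checking a finite list of mutants can only find invaders; it cannot prove that a strategy is collectively stable, since that requires ruling out every possible strategy.

```python
# Payoff to the first player only, with T=5, R=3, P=1, S=0.
PAYOFF = {("C", "C"): 3, ("C", "D"): 0, ("D", "C"): 5, ("D", "D"): 1}

def tit_for_tat(mine, theirs):
    return theirs[-1] if theirs else "C"

def always_defect(mine, theirs):
    return "D"

def always_cooperate(mine, theirs):
    return "C"

def alternate(mine, theirs):
    # Hypothetical mutant: defect on even-numbered moves, cooperate on odd ones.
    return "D" if len(mine) % 2 == 0 else "C"

def suspicious_tit_for_tat(mine, theirs):
    # Hypothetical mutant: TIT FOR TAT that opens with a defection.
    return theirs[-1] if theirs else "D"

MUTANTS = [always_defect, always_cooperate, alternate, suspicious_tit_for_tat]

def discounted_score(a, b, w, rounds=1000):
    """Payoff to rule a against rule b, weighting move k by w**k
    (the infinite series is truncated after `rounds` moves)."""
    mine, theirs = [], []
    total = 0.0
    for k in range(rounds):
        move_a, move_b = a(mine, theirs), b(theirs, mine)
        total += (w ** k) * PAYOFF[(move_a, move_b)]
        mine.append(move_a)
        theirs.append(move_b)
    return total

def invaders(native, mutants, w):
    """Mutants that score higher against a native than a native scores
    against another native."""
    baseline = discounted_score(native, native, w)
    return [m.__name__ for m in mutants if discounted_score(m, native, w) > baseline]

print(invaders(tit_for_tat, MUTANTS, w=0.9))
print(invaders(tit_for_tat, MUTANTS, w=0.6))
```

At w = 0.9 none of these mutants invades TIT FOR TAT (ALWAYS COOPERATE only ties it). At w = 0.6 the two rules that alternate defection and cooperation against it do invade, while ALWAYS DEFECT still does not.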

- We need to be careful here, as this is a very simple fitness test. Add more conditions, such as computational cost, and the fitness landscape becomes multidimensional and potentially more rugged.

- Proposition 2. TIT FOR TAT is collectively stable if and only if w is large enough. This critical value of w is a function of the four payoff parameters, T (temptation), R (reward), P (punishment), and S (sucker’s). The significance of this proposition is that if everyone in a population is cooperating with everyone else because each is using the TIT FOR TAT strategy, no one can do better using any other strategy providing that the future casts a large enough shadow onto the present. In other words, what makes it impossible for TIT FOR TAT to be invaded is that the discount parameter, w, is high enough relative to the requirement determined by the four payoff parameters. For example, suppose that T=5, R=3, P=1, and S=0 as in the payoff matrix shown in figure 1. Then TIT FOR TAT is collectively stable if the next move is at least 2/3 as important as the current move. Under these conditions, if everyone else is using TIT FOR TAT, you can do no better than to do the same, and cooperate with them. On the other hand, if w falls below this critical value, and everyone else is using TIT FOR TAT, it will pay to defect on alternative moves. If w is less than 1/2, it even pays to always defect. (Page 59)
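
- The critical value in Proposition 2 can be checked by comparing TIT FOR TAT's score against itself with the two deviations the quote mentions: always defecting, and defecting on alternate moves. The closed-form payoff streams in the comments are my own derivation, and the threshold max((T-R)/(T-P), (T-R)/(R-S)) is how I read the book's condition; exact fractions avoid rounding noise.

```python
from fractions import Fraction

def tft_vs_tft(w, R):
    # Mutual cooperation forever: R + wR + w^2 R + ... = R / (1 - w)
    return R / (1 - w)

def all_d_vs_tft(w, T, P):
    # Exploit TFT once, then mutual defection forever: T + wP / (1 - w)
    return T + w * P / (1 - w)

def alternate_vs_tft(w, T, S):
    # Defect, cooperate, defect, ... against TFT earns T, S, T, S, ...:
    # (T + wS) / (1 - w^2)
    return (T + w * S) / (1 - w * w)

def critical_w(T, R, P, S):
    # TFT resists both deviations iff w >= max((T-R)/(T-P), (T-R)/(R-S)).
    return max(Fraction(T - R, T - P), Fraction(T - R, R - S))

T, R, P, S = 5, 3, 1, 0
print(critical_w(T, R, P, S))  # 2/3
for w in (Fraction(9, 10), Fraction(3, 5), Fraction(2, 5)):
    print(w, tft_vs_tft(w, R), all_d_vs_tft(w, T, P), alternate_vs_tft(w, T, S))
```

With these payoffs the two ratios are 1/2 and 2/3, which matches the quote's two thresholds. At w = 3/5, alternating earns 125/16 against TIT FOR TAT's 15/2, while always defecting earns only 13/2; at w = 2/5 both deviations beat TIT FOR TAT's 5.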

- From Wikipedia: If both players cooperate, they both receive the reward R for cooperating. If both players defect, they both receive the punishment payoff P. If Blue defects while Red cooperates, then Blue receives the temptation payoff T, while Red receives the “sucker’s” payoff, S. Similarly, if Blue cooperates while Red defects, then Blue receives the sucker’s payoff S, while Red receives the temptation payoff T.
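
- The four payoffs can be captured in a small lookup, sketched here with a hypothetical `Payoffs` type. The check encodes the standard Prisoner's Dilemma conditions: T > R > P > S, plus 2R > T + S so that steady mutual cooperation beats taking turns exploiting each other.

```python
from typing import NamedTuple

class Payoffs(NamedTuple):
    T: int  # temptation to defect
    R: int  # reward for mutual cooperation
    P: int  # punishment for mutual defection
    S: int  # sucker's payoff

    def is_prisoners_dilemma(self):
        # T > R > P > S makes defection the dominant single move;
        # 2R > T + S rules out alternating exploitation as a better deal.
        return self.T > self.R > self.P > self.S and 2 * self.R > self.T + self.S

    def outcome(self, blue, red):
        """Return (blue_payoff, red_payoff) for moves "C" or "D"."""
        table = {
            ("C", "C"): (self.R, self.R),
            ("D", "D"): (self.P, self.P),
            ("D", "C"): (self.T, self.S),
            ("C", "D"): (self.S, self.T),
        }
        return table[(blue, red)]

AXELROD = Payoffs(T=5, R=3, P=1, S=0)
print(AXELROD.is_prisoners_dilemma())  # True
print(AXELROD.outcome("D", "C"))       # (5, 0)
```

With T=6, R=3, P=1, S=0 the second condition fails (2R equals T + S), so that game is not a Prisoner's Dilemma in this strict sense.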

- One specific implication is that if the other player is unlikely to be around much longer because of apparent weakness, then the perceived value of w falls and the reciprocity of TIT FOR TAT is no longer stable. We have Caesar’s explanation of why Pompey’s allies stopped cooperating with him. “They regarded his [Pompey’s] prospects as hopeless and acted according to the common rule by which a man’s friends become his enemies in adversity” (Page 59)

- Proposition 4. For a nice strategy to be collectively stable, it must be provoked by the very first defection of the other player. The reason is simple enough. If a nice strategy were not provoked by a defection on move n, then it would not be collectively stable because it could be invaded by a rule which defected only on move n. (Page 62)
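
- A sketch of this argument, assuming w = 0.9 and the book's payoffs (T=5, R=3, P=1, S=0). TIT FOR TWO TATS stands in for a nice rule that is not provoked by a single defection, and the hypothetical invader built by `defect_only_on` cooperates on every move except move 5 (counting from 0).

```python
# Payoff to the first player only, with T=5, R=3, P=1, S=0.
PAYOFF = {("C", "C"): 3, ("C", "D"): 0, ("D", "C"): 5, ("D", "D"): 1}

def tit_for_tat(mine, theirs):
    return theirs[-1] if theirs else "C"

def tit_for_two_tats(mine, theirs):
    # Nice, but not provoked by a single defection.
    return "D" if theirs[-2:] == ["D", "D"] else "C"

def defect_only_on(n):
    """Build a hypothetical invader that cooperates on every move except move n."""
    def rule(mine, theirs):
        return "D" if len(mine) == n else "C"
    return rule

def discounted_score(a, b, w=0.9, rounds=1000):
    """Payoff to rule a against rule b, weighting move k by w**k (truncated)."""
    mine, theirs, total = [], [], 0.0
    for k in range(rounds):
        move_a, move_b = a(mine, theirs), b(theirs, mine)
        total += (w ** k) * PAYOFF[(move_a, move_b)]
        mine.append(move_a)
        theirs.append(move_b)
    return total

mutant = defect_only_on(5)
for native in (tit_for_two_tats, tit_for_tat):
    invaded = discounted_score(mutant, native) > discounted_score(native, native)
    print(native.__name__, "invaded:", invaded)
# tit_for_two_tats invaded: True
# tit_for_tat invaded: False
```

The lone defection pays against TIT FOR TWO TATS but not against TIT FOR TAT, whose retaliation on the next move costs the invader more than it gained. That second result depends on w exceeding the 2/3 threshold for these payoffs.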

- Many of the benefits sought by living things are disproportionately available to cooperating groups. While there are considerable differences in what is meant by the terms “benefits” and “sought,” this statement, insofar as it is true, lays down a fundamental basis for all social life. The problem is that while an individual can benefit from mutual cooperation, each one can also do even better by exploiting the cooperative efforts of others. Over a period of time, the same individuals may interact again, allowing for complex patterns of strategic interactions. As the earlier chapters have shown, the Prisoner’s Dilemma allows a formalization of the strategic possibilities inherent in such situations. (Page 92)

- How groups are structured and connected affects this tremendously.

- Non-zero-sum games, such as the Prisoner’s Dilemma, are not like this. Unlike the clouds, the other player can respond to your own choices. And unlike the chess opponent, the other player in a Prisoner’s Dilemma should not be regarded as someone who is out to defeat you. The other player will be watching your behavior for signs of whether you will reciprocate cooperation or not, and therefore your own behavior is likely to be echoed back to you. (Page 121)

- There is a fundamental difference between interacting with the external environment and interacting with another player. The environment is largely immutable, and the individual must adapt to it. This adaptation can be simple (don’t jump off the cliff) or complicated (free-climbing the same cliff). The more complicated the adaptation, the more difficult it is for the agent to do anything else. Each problem we encounter, social or environmental, has a cognitive cost that we pay out of our limited cognitive budget.

- Having a firm reputation for using TIT FOR TAT is advantageous to a player, but it is not actually the best reputation to have. The best reputation to have is the reputation for being a bully. The best kind of bully to be is one who has a reputation for squeezing the most out of the other player while not tolerating any defections at all from the other. The way to squeeze the most out of the other is to defect so often that the other player just barely prefers cooperating all the time to defecting all the time. And the best way to encourage cooperation from the other is to be known as someone who will never cooperate again if the other defects even once. (Page 152)

- This is the most interesting description of Donald Trump that I have ever read.

- The “neighbors” of this soft drink are other drinks on the market with a little more or less sugar, or a little more or less caffeine. Similarly, a Political candidate might take a position on a liberal/conservative dimension and a position on an internationalism/isolationism dimension. If there are many candidates vying with each other in an election, the “neighbors” of the candidate are those with similar positions. Thus territories can be abstract spaces as well as geographic spaces. (Page 159)

- Look! Belief spaces!